Essential Points

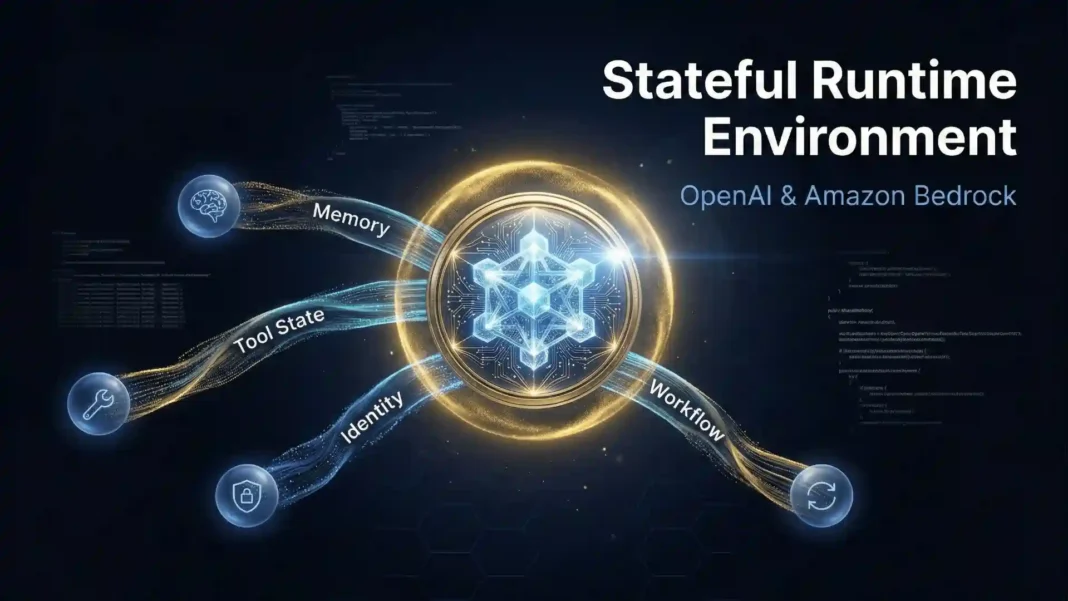

- On February 26, 2026, OpenAI and AWS announced the Stateful Runtime Environment, jointly developed to run natively through Amazon Bedrock

- Agents automatically carry forward memory, tool state, workflow history, and identity permission boundaries across multi-step tasks

- The runtime runs inside the customer’s own AWS environment, aligning with existing security, governance, and infrastructure controls

- General availability is expected within the next few months; enterprise teams can contact OpenAI or AWS now for early access

Most enterprise AI agent projects stall not because the model fails to reason, but because the infrastructure around it cannot hold a thought. OpenAI and Amazon address this directly with the Stateful Runtime Environment, jointly developed to run natively inside Amazon Bedrock and powered by OpenAI’s GPT models. This is not a minor API update. It changes the foundational architecture of how production-grade agents are built and deployed at scale.

Why Stateless Agents Break Under Real Workloads

Many agent prototypes built on stateless APIs handle simple use cases well: one prompt, one answer, maybe one tool call. Production work is fundamentally different. Real workflows unfold across many steps, require context from previous actions, depend on multiple tool outputs, and need trusted guardrails inside secure environments.

Stateless APIs place the entire orchestration burden on the development team. Teams must independently figure out how state is stored, how tools are invoked, how errors are handled, and how long-running tasks resume safely when interrupted. The result is fragile, custom-built scaffolding that grows in complexity alongside the workflow it supports.
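
To make that burden concrete, here is a minimal Python sketch of the scaffolding a team might hand-write around a stateless model API. It keeps the accumulated history itself, passes it into every step, and checkpoints results to disk so that a rerun skips steps that already finished. The names `run_workflow` and `demo_resume`, the JSON checkpoint format, and the two example steps are illustrative assumptions, not part of any OpenAI or AWS API; the steps stand in for real model or tool calls.

```python
import json
import tempfile
from pathlib import Path
from typing import Callable

# Hypothetical sketch of hand-built orchestration around a stateless model API.
# None of these names belong to OpenAI, Amazon Bedrock, or AgentCore.
Step = Callable[[dict], str]


def run_workflow(steps: list[tuple[str, Step]], checkpoint: Path) -> dict:
    """Run named steps in order, checkpointing after each so a rerun resumes."""
    if checkpoint.exists():
        # Resume: reload history and results saved by an interrupted run.
        state = json.loads(checkpoint.read_text())
    else:
        state = {"history": [], "completed": {}}

    for name, step in steps:
        if name in state["completed"]:
            continue  # finished in an earlier run; skip to avoid repeating side effects
        # A stateless API remembers nothing, so each step receives the whole
        # accumulated state and must re-inject whatever context it needs.
        result = step(state)
        state["completed"][name] = result
        state["history"].append({"step": name, "output": result})
        # Persist after every step so an interruption loses at most one step.
        checkpoint.write_text(json.dumps(state))
    return state


def demo_resume() -> tuple[list[str], dict]:
    """Simulate a run that stops after one step, then a second run that resumes."""
    calls: list[str] = []

    def look_up_account(state: dict) -> str:
        calls.append("look_up_account")
        return "acct-123"

    def log_case(state: dict) -> str:
        calls.append("log_case")
        return "case for " + state["completed"]["look_up_account"]

    with tempfile.TemporaryDirectory() as tmp:
        checkpoint = Path(tmp) / "checkpoint.json"
        run_workflow([("look_up_account", look_up_account)], checkpoint)
        state = run_workflow(
            [("look_up_account", look_up_account), ("log_case", log_case)],
            checkpoint,
        )
    return calls, state
```

Calling `demo_resume()` returns `calls == ["look_up_account", "log_case"]`: the account lookup runs only once even though the workflow is started twice. This code, plus the error handling a real system would add, is the kind of scaffolding the Stateful Runtime is intended to take over.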

What the Stateful Runtime Environment Actually Does

The Stateful Runtime Environment runs inside your AWS environment and is optimized to work with AWS services. This is a critical architectural distinction. Context, permissions, and workflow state never leave the customer’s AWS boundary, which directly supports compliance and security posture requirements.

Amazon CEO Andy Jassy described the developer value this way: “If you’re an AI application developer, you don’t want to start from scratch every time you’re actually using models. Being able to access state, whether it’s memory or identity, or being able to call tools or call out to compute, being able to do that in a stateful way where we’re together training those, that Stateful Runtime Environment on AWS’s infrastructure, there’s nothing else like that today.”

The runtime will be trained jointly to run optimally on AWS infrastructure, and it integrates with Amazon Bedrock AgentCore and AWS infrastructure services so that agents operate cohesively with the rest of the customer’s applications running in AWS.

The Four Pillars of Stateful Execution

Instead of manually stitching together disconnected requests, agents in the Stateful Runtime automatically execute complex steps with working context that carries forward four things:

- Memory and history: The agent retains conversation context and prior outputs across every step without manual re-injection

- Tool and workflow state: Tool calls, pipeline checkpoints, and API responses persist so the agent knows exactly what has already been executed

- Environment use: The agent maintains awareness of which systems it has accessed and what actions it has completed

- Identity and permission boundaries: Access controls and governance rules travel with the agent across every step, keeping it within its authorized scope
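
One way to picture the four pillars is as a single context object that travels with the agent. The sketch below is a hypothetical Python model, not the runtime’s actual schema or API: each field maps to one pillar, and the illustrative `call_tool` method refuses tools outside `allowed_tools`, reuses results it has already recorded, and updates history and environment use as it goes.

```python
from dataclasses import dataclass, field
from typing import Callable


# Hypothetical model of an agent's working context; the field names are
# illustrative and are not the Stateful Runtime's actual schema.
@dataclass
class WorkingContext:
    # Identity and permission boundaries: the tools this agent may call.
    allowed_tools: frozenset[str]
    # Memory and history: a running record of prior outputs.
    history: list[str] = field(default_factory=list)
    # Tool and workflow state: results of tool calls already executed.
    tool_results: dict[str, str] = field(default_factory=dict)
    # Environment use: systems the agent has accessed.
    systems_accessed: set[str] = field(default_factory=set)

    def call_tool(self, tool: str, system: str, run: Callable[[], str]) -> str:
        """Run a permitted tool once and record the outcome in the context."""
        if tool not in self.allowed_tools:
            raise PermissionError(f"{tool!r} is outside this agent's authorized scope")
        if tool in self.tool_results:
            # Already executed in an earlier step: reuse instead of re-running.
            return self.tool_results[tool]
        result = run()
        self.tool_results[tool] = result
        self.systems_accessed.add(system)
        self.history.append(f"{tool} -> {result}")
        return result
```

For simplicity the sketch keys tool results by tool name; a real system would key them by a per-call identifier so the same tool can run with different arguments.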

When the runtime handles persistent orchestration and state across steps, development teams focus on workflow logic and business outcomes instead of infrastructure scaffolding.

Use Cases That Now Become Viable at Scale

OpenAI identifies four workflow categories where the Stateful Runtime removes barriers that were previously hard to overcome:

Multi-system customer support requires an agent to pull account data, check inventory, log a case, and send a confirmation without losing context between those steps. Stateful execution handles the full chain autonomously.

Sales operations workflows involve lead qualification, CRM updates, follow-up scheduling, and handoff to human reps at the right moment. The runtime maintains continuity across every stage of that process.

Finance processes with approvals and audits demand that every action be logged, every approval be traceable, and every step be resumable if interrupted. The runtime’s identity and permission persistence makes compliant execution the default behavior.

Internal IT automation requires agents that know which tickets they have touched, which systems they have already queried, and which escalations are pending. The runtime delivers that continuity across sessions.
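
The finance requirements above (logged actions, traceable approvals, safe resumption) can be illustrated with a small hypothetical Python class. It assumes a simple policy invented for this example, in which payments above a threshold need at least one recorded approval. It shows the requirements themselves, not how the Stateful Runtime enforces them; `AuditedPayment` and its methods are illustrative names.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


# Hypothetical sketch of an approval-gated, audited step. It illustrates the
# requirements in the text; it is not how the Stateful Runtime implements them.
@dataclass
class AuditedPayment:
    amount_cents: int
    approval_threshold_cents: int
    approvals: set[str] = field(default_factory=set)
    # Append-only trail of (UTC timestamp, actor, action).
    audit_log: list[tuple[str, str, str]] = field(default_factory=list)
    executed: bool = False

    def _log(self, actor: str, action: str) -> None:
        self.audit_log.append((datetime.now(timezone.utc).isoformat(), actor, action))

    def approve(self, approver: str) -> None:
        """Record an approval so it is traceable to a named approver."""
        self.approvals.add(approver)
        self._log(approver, "approved")

    def execute(self, agent: str) -> bool:
        """Pay at most once; amounts above the threshold need an approval."""
        if self.executed:
            # A resumed or retried run must not pay twice.
            self._log(agent, "skipped: already executed")
            return False
        if self.amount_cents > self.approval_threshold_cents and not self.approvals:
            self._log(agent, "blocked: approval required")
            return False
        self.executed = True
        self._log(agent, f"executed payment of {self.amount_cents} cents")
        return True
```

A first `execute` call on a payment above the threshold is blocked and logged; after `approve` it succeeds; a retry after that is recorded as skipped rather than paying twice.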

The OpenAI and AWS Strategic Partnership

The Stateful Runtime is part of a broader strategic partnership between OpenAI and Amazon announced on February 26, 2026. Andy Jassy noted that AWS now has two of the largest AI labs betting significantly on Trainium, referring to OpenAI and Anthropic. The runtime will be trained to run optimally on AWS infrastructure, so rather than offering generic performance, it is meant to be tuned for the specific hardware and service architecture customers already run in AWS.

Jassy was direct about the significance: “We have lots of developers and companies eager to run services powered by OpenAI models on AWS, and our unique collaboration with OpenAI to provide stateful runtime environments will change what’s possible for customers building AI apps and agents.”

What Development Teams Should Do Now

The Stateful Runtime in Amazon Bedrock is not yet generally available as of late February 2026. Teams planning production agent deployments in the coming months should prepare now.

Three immediate actions apply:

- Audit your current orchestration layer. Identify which custom state management components the runtime will replace. Removing that scaffolding reduces technical debt and long-term maintenance cost.

- Review Amazon Bedrock AgentCore documentation. The runtime integrates with AgentCore’s memory, tool invocation, and runtime host layers. Familiarity with that architecture shortens adoption timelines.

- Contact your OpenAI team or AWS account representative. Early access exploration is available now for enterprise teams that reach out. First movers may gain implementation support and influence over feature priorities before general release.

Limitations and Considerations

The Stateful Runtime Environment is currently designed and optimized specifically for AWS-native deployments. Organizations operating hybrid or multi-cloud architectures will need to evaluate integration requirements if their agents span multiple cloud environments. The runtime is in pre-launch status as of the announcement, which means documentation, regional availability, and pricing details are not yet published. Teams should treat current planning as preparatory and confirm specifics with OpenAI or AWS directly before committing to architecture decisions.

Frequently Asked Questions (FAQs)

What is the OpenAI Stateful Runtime Environment in Amazon Bedrock?

It is an execution layer, jointly developed by OpenAI and AWS, that runs OpenAI’s GPT models natively inside Amazon Bedrock. It gives AI agents persistent working context, including memory, tool state, workflow history, and identity permissions, across multi-step tasks, removing the need for developers to build custom orchestration infrastructure.

How is a stateful agent different from a stateless agent?

A stateless agent treats every API call as a fresh start with no memory of prior steps or context. A stateful agent carries forward its full working context, including completed tool calls, prior outputs, and permission boundaries, which lets it execute complex multi-step workflows reliably without manually re-injecting context at every step.

When will the Stateful Runtime Environment in Amazon Bedrock be available?

General availability is expected within a few months of the February 26, 2026 announcement. Enterprise teams can contact their OpenAI representative or AWS account manager now to explore readiness and request early access.

What AWS services does the runtime integrate with?

The Stateful Runtime integrates with Amazon Bedrock AgentCore and AWS infrastructure services, allowing agents to run cohesively with the rest of an organization’s applications already operating in AWS. It will be trained to run optimally on AWS infrastructure, including Trainium chips.

What enterprise workflows benefit most from stateful agents?

OpenAI specifically identifies multi-system customer support, sales operations workflows, internal IT automation, and finance processes requiring approvals and audits. These workflows share a common requirement: context must persist reliably across many steps, systems, and time intervals.

Does the Stateful Runtime work with existing AWS security and compliance controls?

Yes. The runtime runs inside the customer’s AWS environment and is designed to operate within the existing security posture, tooling integrations, and governance rules already in place. Compliance alignment is a built-in design objective, not an add-on.

What is the significance of the OpenAI and Amazon strategic partnership for developers?

The partnership makes OpenAI’s GPT models available through Amazon Bedrock with stateful execution optimized for AWS infrastructure. Developers gain access to frontier model intelligence without sacrificing the security, governance, and infrastructure continuity of their existing AWS environment.