Quick Brief

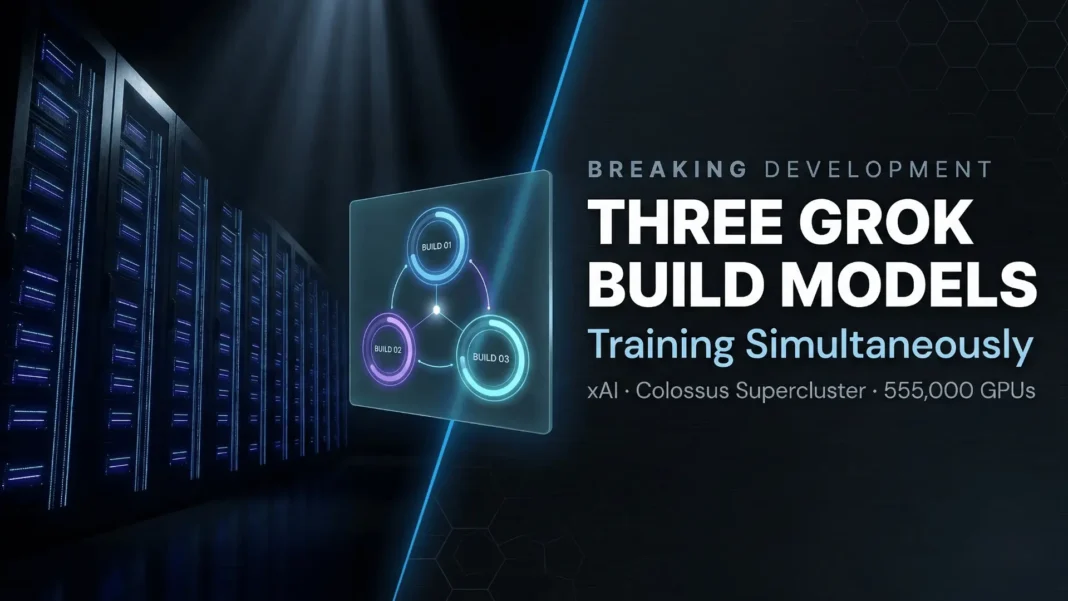

- Elon Musk confirmed via X on March 16, 2026 that three Grok Build models will train simultaneously by this weekend

- xAI’s Colossus supercluster now houses 555,000 NVIDIA GPUs across Memphis facilities at 2 gigawatts total capacity

- xAI simultaneously acknowledged structural problems, with Musk stating the company “was not built right first time around”

- Tesla’s Terafab chip project launches March 21, 2026, the same weekend, and is designed to expand xAI’s compute independence

Elon Musk confirmed on March 16, 2026, that xAI will have three Grok Build models in simultaneous training by this weekend, a technical milestone that reveals the scale of infrastructure xAI has assembled in Memphis. The announcement lands in the same week Musk publicly admitted xAI “was not built right first time around,” creating a striking contrast between infrastructure ambition and internal restructuring. Both realities matter for anyone tracking where Grok is headed.

What “Grok Build Models” Actually Means

Not all Grok models serve the same purpose. The “Build” designation within xAI’s pipeline refers to models optimized for developer and infrastructure use, distinct from the consumer-facing Grok assistant available on X.

Running three Build models simultaneously means xAI can test different architectural hypotheses, training data mixtures, and capability targets in parallel rather than sequentially. Sequential training is slower and more conservative. Parallel pipelines allow labs to compress iteration timelines by running concurrent experiments against different optimization targets.
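The scheduling difference can be illustrated with a rough sketch. The run names and durations below are hypothetical placeholders, not figures from xAI. The only point is that sequential wall-clock time is the sum of the run lengths, while parallel wall-clock time is bounded by the longest run, assuming there is enough compute to host every run at once.

```python
# Hypothetical run lengths in days; xAI has not published real figures.
run_days = {"build_a": 30, "build_b": 45, "build_c": 40}


def sequential_days(runs: dict) -> int:
    """Wall-clock time when each run starts only after the previous one ends."""
    return sum(runs.values())


def parallel_days(runs: dict) -> int:
    """Wall-clock time when all runs start together on separate hardware."""
    return max(runs.values())


seq = sequential_days(run_days)
par = parallel_days(run_days)
print(f"sequential: {seq} days, parallel: {par} days, saved: {seq - par} days")
```

The saving comes at the price of needing roughly three times the hardware at once, which is why the approach depends on cluster scale.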

The Infrastructure Behind Three Simultaneous Runs

Training a frontier AI model is not a weekend task. A single large-scale training run can consume millions of GPU-hours and cost millions of dollars. Running three at once requires not only massive compute capacity but also sophisticated orchestration infrastructure.

xAI’s Colossus supercluster in Memphis now houses 555,000 NVIDIA GPUs, including GB200 and GB300 Blackwell units, across multiple buildings operating at approximately 2 gigawatts of total capacity. That made it the world’s largest single-site AI training installation as of January 2026. The cluster started in mid-2024 with 100,000 H100 GPUs and expanded continuously throughout 2025, with the first batch of 550,000 GB200s and GB300s going live at Colossus 2 in July 2025. Three simultaneous Build-level training runs are a direct product of that infrastructure scale.
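As a back-of-the-envelope check on those two headline numbers, the sketch below divides the reported site capacity by the reported GPU count. The result is a facility-level average that also covers cooling, networking, and host CPUs. It is not a per-chip power rating, and it assumes the 2 gigawatt figure refers to the same installed base as the 555,000 GPUs.

```python
TOTAL_POWER_WATTS = 2e9  # ~2 gigawatts, as reported in the article
GPU_COUNT = 555_000      # GPUs reported at Colossus


def facility_watts_per_gpu(total_watts: float, gpus: int) -> float:
    """Average site capacity available per GPU, overheads included."""
    return total_watts / gpus


per_gpu_kw = facility_watts_per_gpu(TOTAL_POWER_WATTS, GPU_COUNT) / 1000
print(f"{per_gpu_kw:.1f} kW of site capacity per GPU")
```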

Tesla Terafab Launches the Same Weekend

The timing of this training announcement is not coincidental. On March 14, 2026, Musk posted on X that the “Terafab Project launches in 7 days,” pointing to March 21, 2026 as the launch date for Tesla’s vertically integrated chip fabrication effort.

Terafab is designed to give xAI a self-sufficient compute supply chain. Industry analysts have estimated that controlling chip production end-to-end could reduce AI training costs by up to 40% while improving delivery timelines. For xAI’s parallel training ambitions, Terafab provides a long-term foundation that reduces dependence on external GPU procurement at scale.

xAI’s Structural Rebuild Is Happening Simultaneously

The parallel training announcement cannot be read in isolation. On March 12, 2026, Musk stated on X that “xAI was not built right first time around, so is being rebuilt from the foundations up.” The same day, The Information reported that xAI hired two senior engineers from Cursor, Jason Ginsberg and Andrew Milich, to lead a rebuild of Grok’s coding product.

The specific trigger for the restructuring was Grok’s coding product falling behind Anthropic’s Claude Code and OpenAI’s Codex on benchmarks that matter to professional developers. Grok Code Fast 1 achieved 70.8% on SWE-Bench Verified, a functional but trailing result against competitors. Ten of xAI’s twelve original co-founders have now departed.

What This Means for Developers and Businesses

For developers currently building on the Grok API, two things are happening in parallel: infrastructure capacity is expanding significantly, and the coding product is being rebuilt under newly hired leadership. These are not contradictory signals. They suggest xAI is investing heavily at the infrastructure layer while acknowledging product-level gaps that require new leadership to close.

Musk has stated xAI expects to “catch up and exceed competitors” in coding by mid-2026. For Indian and US businesses evaluating AI APIs, xAI’s per-token pricing remains significantly lower than OpenAI’s at the fast-tier level, making it worth tracking closely as model quality improves. Watch the xAI developer blog and the xAI API changelog for capability announcements tied to this training batch over the next 60 to 90 days.

Limitations and Open Questions

xAI has not disclosed specific parameter counts, training data sources, or target benchmarks for the three Build models currently in training. Without that detail, it is not possible to assess whether these represent frontier-scale runs or mid-tier experimental models. The announcement from Musk is a directional signal, not a technical specification.

Additionally, simultaneous training does not guarantee simultaneous or rapid release. Training completion and public deployment are typically separated by months of evaluation, fine-tuning, and safety testing. The internal restructuring also introduces execution risk at a moment when xAI is simultaneously rebuilding its core coding product team.

Frequently Asked Questions (FAQs)

What are Grok Build models?

Grok Build models are xAI’s developer-focused AI variants, optimized for API use cases including coding, tool integration, and enterprise workflows. They are distinct from the consumer Grok assistant on X and form the technical backbone of xAI’s developer platform.

Why is xAI training three models at the same time?

Parallel training allows xAI to test different architectures and optimization targets simultaneously rather than sequentially. This compresses development timelines and allows the lab to fast-track whichever model performs best, without waiting months for sequential runs to complete.

How large is xAI’s Colossus supercluster in 2026?

As of January 2026, Colossus houses 555,000 NVIDIA GPUs, primarily GB200 and GB300 Blackwell units, across multiple Memphis buildings operating at approximately 2 gigawatts of total power capacity. xAI has confirmed a target of 1 million GPUs in total, which puts the Memphis site at roughly 55% of that goal.

What is the Tesla Terafab project and how does it relate to xAI?

Terafab is Tesla’s vertically integrated chip fabrication initiative, scheduled to launch March 21, 2026. It is designed to supply AI chips for Tesla’s autonomous vehicle programs and provide xAI with a self-sufficient compute supply chain independent of external GPU suppliers.

Why did Musk say xAI “was not built right”?

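On March 12, 2026, Musk stated on X that xAI “was not built right first time around, so is being rebuilt from the foundations up.” The trigger for the restructuring was Grok’s coding product falling behind Anthropic’s Claude Code and OpenAI’s Codex. According to The Information, xAI hired two senior engineers from Cursor to lead a rebuild of that product, and Musk has said xAI expects to catch up with and exceed competitors in coding by mid-2026.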

When will the new Grok Build models be released publicly?

No public release timeline has been confirmed. Training completion typically precedes public deployment by several months due to evaluation, safety testing, and fine-tuning. Developers should monitor the xAI developer blog and the xAI API changelog for announcements.

Is xAI’s Grok API cheaper than OpenAI?

Yes. At the fast-tier level, Grok’s API pricing is significantly lower than OpenAI’s per-token costs as of early 2026. For cost-sensitive production deployments, this pricing advantage makes xAI worth evaluating, particularly if model quality improves from the current training batch.

What should developers building on Grok API do right now?

Benchmark your current Grok API performance on key use cases and document baselines now. When xAI releases models from this training batch, established benchmarks will allow rapid, evidence-based decisions on whether to upgrade integrations or shift workloads.
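One way to capture such a baseline is sketched below, under stated assumptions. The harness takes any function that maps a prompt to a reply, so it does not depend on a particular client library. `fake_model`, the two test cases, and the substring-match pass criterion are all hypothetical stand-ins; swap in your own API call and your own checks.

```python
import json
import statistics
import time
from typing import Callable


def run_baseline(call_model: Callable[[str], str], cases: list) -> dict:
    """Run each (prompt, expected_substring) case and summarize the results."""
    if not cases:
        raise ValueError("at least one test case is required")
    latencies = []
    passed = 0
    for prompt, expected in cases:
        start = time.perf_counter()
        reply = call_model(prompt)
        latencies.append(time.perf_counter() - start)
        # Crude pass criterion: expected text appears somewhere in the reply.
        if expected.lower() in reply.lower():
            passed += 1
    return {
        "cases": len(cases),
        "pass_rate": passed / len(cases),
        "median_latency_s": statistics.median(latencies),
    }


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for a real API call; replace with your client."""
    return "4" if "2 + 2" in prompt else "unsure"


cases = [("What is 2 + 2?", "4"), ("Name the capital of France.", "Paris")]
baseline = run_baseline(fake_model, cases)
print(json.dumps(baseline, indent=2))
```

Saving the printed JSON alongside the date and the model name gives a reference point to rerun against any newly released model.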