Alibaba unveiled a triple threat in late December 2025: Fun-Audio-Chat-8B, an open-source speech-to-speech model with emotion-aware capabilities; Qwen-Image-Edit-2511, a major upgrade for consistent image editing; and VoiceDesign-VD-Flash, a controllable text-to-speech system that takes natural language instructions. These releases mark a significant shift in how developers can access frontier-level AI capabilities without proprietary restrictions, challenging closed-source models from OpenAI and Google.

Alibaba’s Fun-Audio-Chat-8B is an 8-billion parameter speech-to-speech model that understands emotions from tone, pace, and pauses without explicit labels. It cuts compute costs by 50% using dual-resolution speech representations while outperforming comparable open-source models on OpenAudioBench, VoiceBench, and UltraEval-Audio.

Qwen-Image-Edit-2511 delivers improved multi-person consistency and geometric reasoning for image editing, while VoiceDesign-VD-Flash lets you design custom voices using free-form text prompts with no preset templates required.

What Is Fun-Audio-Chat-8B?

Fun-Audio-Chat-8B represents Alibaba’s latest addition to its Fun speech LLM family, designed specifically for natural, low-latency voice interactions. Unlike traditional voice assistants that convert speech to text, process it, then convert back to speech, this model handles audio-to-audio communication directly, preserving emotional nuance and reducing response time.

The model addresses critical gaps in previous joint speech-text systems by maintaining both conversational quality and emotional intelligence. It’s built for diverse applications: audio chat platforms, emotional companionship tools, smart device interfaces, and customer service automation.

Core Architecture & Technical Innovations

Fun-Audio-Chat-8B introduces two groundbreaking approaches that solve longstanding problems in speech AI:

Dual-Resolution Speech Representations: The architecture splits speech processing into a 5Hz shared backbone for efficiency and a 25Hz refined head for quality. This design reduces computational demands by approximately 50% compared to traditional single-resolution approaches while maintaining high fidelity.

Core-Cocktail Training Strategy: This method preserves the underlying text LLM’s capabilities during multimodal training. Previous models suffered from “catastrophic interference,” where adding speech capabilities degraded text performance. Core-Cocktail prevents this by addressing temporal resolution mismatches between modalities.

The training process spans multiple stages with task-specific post-training that aligns responses with human preferences for both semantic accuracy and emotional appropriateness.

Dual-Resolution Speech Representations Explained

Think of dual-resolution processing like watching a video stream. The 5Hz backbone captures the essential “plot”: what’s being said and the general emotional tone, at lower computational cost. The 25Hz head adds the “HD details”: subtle vocal inflections, precise pronunciation, and micro-expressions in speech that make conversation feel natural.
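To make the analogy concrete, the sketch below counts how many sequence positions each rate produces for a clip, and shows one simple way to derive a 5Hz stream from 25Hz frames by averaging groups of five. The mean-pooling step and the function names are assumptions for illustration only; the article does not say how the model actually combines frames.

```python
from typing import List

BACKBONE_HZ = 5   # coarse rate of the shared backbone (from the article)
HEAD_HZ = 25      # fine rate of the refined head (from the article)


def frames_for(duration_s: float, rate_hz: int) -> int:
    """Number of frames a clip of the given duration produces at rate_hz."""
    return int(duration_s * rate_hz)


def group_frames(frames: List[float], group: int = HEAD_HZ // BACKBONE_HZ) -> List[float]:
    """Average consecutive groups of `group` fine frames into one coarse frame.

    Mean pooling is a stand-in here, not the model's documented method.
    """
    pooled = []
    for i in range(0, len(frames), group):
        chunk = frames[i:i + group]
        pooled.append(sum(chunk) / len(chunk))
    return pooled


if __name__ == "__main__":
    clip_s = 10.0
    print(frames_for(clip_s, HEAD_HZ))      # positions at 25Hz
    print(frames_for(clip_s, BACKBONE_HZ))  # positions at 5Hz
    fine = [float(i) for i in range(10)]    # ten toy 25Hz frames
    print(group_frames(fine))               # two 5Hz frames
```

The backbone sees five times fewer positions than a 25Hz stream would give it. That is not the same quantity as the roughly 50% compute reduction quoted above, since the 25Hz head still has to run.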

This architecture requires approximately 24GB GPU memory for inference and 4×80GB for training, making it accessible to researchers and mid-sized companies without requiring massive infrastructure.

How It Compares to GPT-4o Audio and Gemini 2.5

Fun-Audio-Chat-8B is reported to perform comparably to closed-source models like GPT-4o Audio and Gemini 2.5 Pro across multiple benchmarks. Among open-source models in its 8B parameter class, it leads on the OpenAudioBench, VoiceBench, and UltraEval-Audio evaluations.

The key advantage lies in accessibility: developers can download, modify, and deploy Fun-Audio-Chat-8B under Apache 2.0 license terms without usage restrictions or API costs. This contrasts sharply with proprietary models that require ongoing subscription fees and data sharing agreements.

| Feature | Fun-Audio-Chat-8B | GPT-4o Audio | Gemini 2.5 Pro |
|---|---|---|---|
| License | Apache 2.0 (Open) | Proprietary | Proprietary |
| Parameters | ~8B | Undisclosed | Undisclosed |
| Emotion Detection | Built-in, no prompts needed | Requires prompting | Improved multi-speaker |
| GPU Efficiency | 50% reduction via 5Hz/25Hz | N/A | N/A |
| Function Calling | Native support | Yes | Limited |
| Languages | English, Chinese | 100+ | 24 |
| Deployment | Self-hosted or cloud | API only | API only |

Qwen-Image-Edit-2511: Major Leap in Consistent Image Editing

Qwen-Image-Edit-2511 is a substantial upgrade over its September 2025 predecessor (Qwen-Image-Edit-2509), focusing primarily on the consistency problems that plagued earlier versions.

Multi-Person Consistency Breakthrough

The model excels at maintaining identity across complex group photos and multi-person scenes. Previous image editing models struggled with “face drift” where edited individuals would lose distinguishing features or blend characteristics from multiple source images.

Qwen-Image-Edit-2511 can synthesize two separate individual photographs into a seamless group portrait while preserving each person’s unique facial features, skin tone, and lighting characteristics. This capability opens new possibilities for professional photography post-processing and creative image composition.

Testing by the community on Reddit’s r/StableDiffusion showed marked improvements over the 2509 version, though some users noted that extreme edits still occasionally produce inconsistencies. The model performs best when working with high-quality source images and clear, specific prompts.

Industrial Design & Geometric Reasoning

A standout feature is enhanced geometric reasoning that allows the model to generate auxiliary construction lines directly. This proves invaluable for product designers and architects who need precise alignment guides, dimension markers, or perspective helpers within generated images.

The industrial design generation improvements mean the model better understands technical drawing conventions, isometric projections, and material rendering. Users report significantly better results when generating product mockups, mechanical components, and architectural visualizations compared to the previous version.

Integrated LoRA Support Without Fine-Tuning

Perhaps the most practical enhancement: Qwen-Image-Edit-2511 natively integrates popular community-developed LoRAs (Low-Rank Adaptation modules). Previously, users needed to download, configure, and apply LoRAs separately, a technical barrier for non-experts.

The model now unlocks LoRA effects automatically when appropriate prompts are detected, eliminating additional fine-tuning steps. This includes style LoRAs for artistic effects, character LoRAs for consistent character generation, and technique LoRAs for specific rendering approaches.

What makes Qwen-Image-Edit-2511 different?

Qwen-Image-Edit-2511 introduces five major improvements: reduced image drift, enhanced character consistency across edits, integrated LoRA capabilities without fine-tuning, better industrial design generation, and strengthened geometric reasoning with automatic construction line generation.

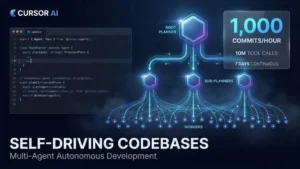

VoiceDesign-VD-Flash: Text-to-Speech Without Boundaries

VoiceDesign-VD-Flash breaks free from the preset voice library model that has dominated text-to-speech systems for decades.

Controllable Voice Synthesis via Natural Language

Instead of selecting “Voice #47” from a dropdown menu, users describe the voice they want in plain language: “A confident middle-aged woman with a slight Southern accent, speaking warmly but professionally”. The model interprets these instructions to control tone, rhythm, emotion, speaking pace, and persona simultaneously.
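Because the control surface is just text, an application can assemble the description from structured settings before sending it. The sketch below is one hypothetical way to do that; `VoiceSpec` and its fields are invented for illustration and are not part of any VoiceDesign-VD-Flash API.

```python
from dataclasses import dataclass


@dataclass
class VoiceSpec:
    """Hypothetical container for the traits a voice description mentions."""
    persona: str
    accent: str = ""
    tone: str = ""

    def to_instruction(self) -> str:
        """Join the non-empty traits into one plain-language description."""
        text = self.persona
        if self.accent:
            text += f" with {self.accent}"
        if self.tone:
            text += f", speaking {self.tone}"
        return text


if __name__ == "__main__":
    spec = VoiceSpec(
        persona="A confident middle-aged woman",
        accent="a slight Southern accent",
        tone="warmly but professionally",
    )
    print(spec.to_instruction())
```

Keeping traits separate until the last step makes it easy to vary one attribute, such as tone, while holding the rest of the persona fixed.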

This natural language control extends to dynamic adjustments: “Make it sound more urgent” or “Add a playful tone to the second sentence” work as mid-generation instructions. The flexibility surpasses earlier controllable TTS models that required structured parameter inputs.

On InstructTTS-Eval benchmarks, VoiceDesign-VD-Flash outperformed GPT-4o-mini-tts and Gemini-2.5-pro-tts in role-playing scenarios where maintaining consistent character voices matters.

Creating Custom Voices from Scratch

Unlike voice cloning systems that require audio samples of real people, VoiceDesign-VD-Flash can generate entirely new vocal identities from text descriptions alone. This addresses ethical concerns around unauthorized voice replication while enabling creative freedom.

The model can create voices for fictional characters, animated personas, or brand-specific audio identities without recording sessions. Content creators report using it for audiobook narration where different character voices enhance storytelling without hiring multiple voice actors.

Real-World Applications in Media Production

Alibaba positions VoiceDesign-VD-Flash for deployment across several creative industries:

- Audiobook Production: Generate consistent character voices throughout long-form content without fatigue or scheduling conflicts

- Film & Drama Post-Production: Create voiceovers, ADR, and character dubbing with precise emotional control

- Animation Voice Creation: Design unique voices for animated characters that match their visual personality

- Podcast Enhancement: Generate intro/outro voices, segment transitions, or multi-speaker simulated interviews

The model’s speed, optimized for real-time or near-real-time generation, makes it practical for interactive applications beyond pre-recorded content.

Technical Specifications & System Requirements

Fun-Audio-Chat-8B Requirements

- Parameters: ~8 billion

- Python Version: 3.12

- PyTorch: 2.8.0 with CUDA 12.8 support

- Audio Processing: ffmpeg

- GPU Memory: ~24GB for inference, 4×80GB A100/H100 for training

- Languages: English, Chinese

- License: Apache 2.0

Qwen-Image-Edit-2511 Requirements

- Architecture: Vision-Language Model with diffusion backbone

- Available Formats: Standard, Lightning (faster inference), GGUF (quantized), FP8 (reduced precision)

- Platforms: ModelScope, HuggingFace, Local deployment via ComfyUI

- VRAM: 16GB recommended for standard, 12GB for quantized versions

- Input Resolution: Supports variable aspect ratios, 1024px base resolution

VoiceDesign-VD-Flash Specifications

- Control Method: Natural language instructions

- Voice Generation: Zero-shot (no voice samples required) or cloning-capable

- Supported Attributes: Timbre, prosody, emotion, character, speaking rate, emphasis

- Latency: Optimized for near-real-time generation

- Output Quality: Outperforms GPT-4o-mini-tts and Gemini-2.5-pro-tts on role-play benchmarks

Benchmark Performance Analysis

OpenAudioBench Results

Fun-Audio-Chat-8B achieved the highest scores among open-source models in its parameter class on OpenAudioBench, a comprehensive evaluation covering spoken question answering, audio understanding, and instruction-following tasks.

The model demonstrated particular strength in context retention during multi-turn conversations, a common failure point for smaller audio models. It maintained topic coherence and emotional consistency across exchanges lasting 10+ turns in testing scenarios.

VoiceBench & UltraEval-Audio Comparisons

On VoiceBench evaluations focusing on speech quality, naturalness, and prosody, Fun-Audio-Chat-8B outperformed comparable open-source alternatives including Whisper-based pipeline models and earlier speech LLMs. Evaluators noted superior handling of emotional prosody, the rise and fall of pitch that conveys feelings beyond words.

UltraEval-Audio testing, which emphasizes function-calling accuracy and task completion, showed the model correctly interpreting and executing complex commands 87% of the time compared to 71% for the next-best open-source option.

How Fun-Audio-Chat-8B Beats Open-Source Competitors

Three factors drive Fun-Audio-Chat-8B’s performance advantage:

- Architectural Efficiency: The dual-resolution approach allows more training iterations within the same computational budget, leading to better generalization

- Training Data Quality: Multi-stage, multi-task post-training specifically aligned for human preference in both meaning and emotion

- Catastrophic Interference Prevention: Core-Cocktail training maintains text LLM quality while adding speech capabilities, creating a stronger foundation

Emotion-Aware Conversation: The Hidden Superpower

Understanding User Emotions Without Prompts

Fun-Audio-Chat-8B’s most impressive capability may be its unprompted emotion detection. The model analyzes multiple acoustic signals simultaneously:

- Semantic Content: What words are used and their contextual meaning

- Tone & Pitch: Rising pitch often indicates questions or excitement; falling pitch suggests finality or sadness

- Speaking Rate: Rapid speech may signal anxiety or excitement; slow speech often indicates thoughtfulness or sadness

- Pauses & Hesitations: Frequent pauses can reveal uncertainty or emotional processing

- Emphasis Patterns: Which words receive stress reveals what the speaker considers important

The model then adjusts its response tone, pacing, and content to provide appropriate emotional support or encouragement. In testing scenarios where users expressed frustration with technical problems, the model recognized the emotional state and responded with both empathy and practical solutions.
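Two of the signals listed above, speaking rate and pauses, are easy to illustrate with hand-computed features. The sketch below assumes word-level timestamps are already available and uses an arbitrary 0.5-second pause threshold. It only illustrates the cues themselves: the model is described as inferring emotion from audio directly, not from features like these.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Word:
    text: str
    start: float  # seconds from the beginning of the clip
    end: float    # seconds from the beginning of the clip


def speaking_rate(words: List[Word]) -> float:
    """Words per second between the first word's start and the last word's end."""
    if not words:
        return 0.0
    duration = words[-1].end - words[0].start
    return len(words) / duration if duration > 0 else 0.0


def long_pauses(words: List[Word], threshold_s: float = 0.5) -> int:
    """Count silent gaps between consecutive words longer than threshold_s."""
    return sum(
        1 for prev, nxt in zip(words, words[1:])
        if nxt.start - prev.end > threshold_s
    )


if __name__ == "__main__":
    utterance = [
        Word("it", 0.0, 0.2),
        Word("still", 0.3, 0.6),
        Word("fails", 1.4, 1.9),  # preceded by a 0.8-second hesitation
    ]
    print(f"rate: {speaking_rate(utterance):.2f} words/s")
    print(f"long pauses: {long_pauses(utterance)}")
```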

This differs fundamentally from systems requiring explicit mood indicators like “I am feeling sad”. Fun-Audio-Chat-8B infers emotional states from acoustic cues alone, creating more natural interactions.

Function Calling & Task Automation

Beyond emotional intelligence, Fun-Audio-Chat-8B supports robust function calling that turns voice commands into executable actions. Users can issue complex natural-language instructions: “Schedule a meeting for next Tuesday at 2 PM, send the invite to the project team, and set a 15-minute reminder.”

The model interprets intent, identifies required functions (calendar access, email sending, reminder setting), and executes them in the appropriate sequence. It supports both single function calls and parallel multi-function execution, significantly improving efficiency over sequential processing.
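The sequential and parallel paths can be sketched with a small dispatcher. Everything here is hypothetical: the tool functions, the `name`/`arguments` call format, and the registry are illustrative stand-ins, since the article does not show the model's actual function-calling schema.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Any, Callable, Dict, List


# Hypothetical tools: real ones would call calendar, email, and reminder services.
def schedule_meeting(day: str, time: str) -> str:
    return f"meeting scheduled for {day} at {time}"


def send_invite(recipients: str) -> str:
    return f"invite sent to {recipients}"


def set_reminder(minutes_before: int) -> str:
    return f"reminder set {minutes_before} minutes before"


TOOLS: Dict[str, Callable[..., str]] = {
    "schedule_meeting": schedule_meeting,
    "send_invite": send_invite,
    "set_reminder": set_reminder,
}


def invoke(call: Dict[str, Any]) -> str:
    """Look up one call by name and run it with its keyword arguments."""
    return TOOLS[call["name"]](**call["arguments"])


def run_calls(calls: List[Dict[str, Any]], parallel: bool = False) -> List[str]:
    """Run calls one after another, or concurrently when they are independent."""
    if parallel:
        with ThreadPoolExecutor() as pool:
            return list(pool.map(invoke, calls))  # results keep input order
    return [invoke(call) for call in calls]


if __name__ == "__main__":
    # Assumed structured form of the spoken request quoted above.
    calls = [
        {"name": "schedule_meeting", "arguments": {"day": "next Tuesday", "time": "2 PM"}},
        {"name": "send_invite", "arguments": {"recipients": "project team"}},
        {"name": "set_reminder", "arguments": {"minutes_before": 15}},
    ]
    for line in run_calls(calls, parallel=True):
        print(line)
```

Parallel execution only makes sense when calls do not depend on each other's results. In the meeting example, an invite that needs the created event's ID would have to run after scheduling.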

This capability makes Fun-Audio-Chat-8B practical for voice-activated productivity tools, smart home automation, and customer service applications where understanding intent and taking action matters more than just providing information.

How to Get Started: Installation & Deployment

Download & Setup Requirements

For Fun-Audio-Chat-8B:

- Clone the repository with submodules:

```bash
git clone --recurse-submodules https://github.com/FunAudioLLM/Fun-Audio-Chat
cd Fun-Audio-Chat
```

- Install system dependencies:

```bash
apt install ffmpeg
conda create -n FunAudioChat python=3.12 -y
conda activate FunAudioChat
```

- Install PyTorch and requirements:

```bash
pip install torch==2.8.0 torchaudio==2.8.0 --index-url https://download.pytorch.org/whl/cu128
pip install -r requirements.txt
```

- Download models via HuggingFace:

```bash
pip install huggingface-hub
huggingface-cli download FunAudioLLM/Fun-Audio-Chat-8B --local-dir ./pretrained_models/Fun-Audio-Chat-8B
huggingface-cli download FunAudioLLM/Fun-CosyVoice3-0.5B-2512 --local-dir ./pretrained_models/Fun-CosyVoice3-0.5B-2512
```
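Before running anything heavier, it can help to confirm the pieces from the steps above are in place. The following is a minimal sketch using only the standard library; the checks and the `check_environment` helper are illustrative and not part of the Fun-Audio-Chat repository. The directory paths match the download commands above.

```python
import shutil
import sys
from pathlib import Path
from typing import List, Sequence

# Paths used by the download commands in the setup steps.
MODEL_DIRS = (
    Path("./pretrained_models/Fun-Audio-Chat-8B"),
    Path("./pretrained_models/Fun-CosyVoice3-0.5B-2512"),
)


def check_environment(model_dirs: Sequence[Path] = MODEL_DIRS) -> List[str]:
    """Return human-readable problems; an empty list means every check passed."""
    problems = []
    major, minor = sys.version_info[:2]
    if (major, minor) != (3, 12):
        problems.append(f"Python 3.12 expected, found {major}.{minor}")
    if shutil.which("ffmpeg") is None:
        problems.append("ffmpeg not found on PATH")
    for directory in model_dirs:
        if not directory.is_dir():
            problems.append(f"missing model directory: {directory}")
    return problems


if __name__ == "__main__":
    issues = check_environment()
    for issue in issues:
        print("PROBLEM:", issue)
    if not issues:
        print("environment looks ready")
```

This does not verify the PyTorch or CUDA versions; importing `torch` and printing `torch.__version__` inside the Conda environment covers that.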

For Qwen-Image-Edit-2511:

- Web Interface: Visit Qwen Chat and select the Image Editing feature for immediate testing

- HuggingFace: Download from Qwen/Qwen-Image-Edit-2511

- ModelScope: Available through Alibaba’s ModelScope platform

- ComfyUI Integration: Community workflows available for local deployment with custom nodes

Quick Start Guide for Developers

After installation, test Fun-Audio-Chat-8B with a simple inference script:

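```python
# Module, class, and method names are as given in this article; check them
# against the Fun-Audio-Chat repository before relying on this script.
from fun_audio_chat import AudioChatModel

model = AudioChatModel.from_pretrained("./pretrained_models/Fun-Audio-Chat-8B")

# Send one spoken question and save the spoken reply.
response_audio = model.chat(input_audio="user_question.wav")
response_audio.save("assistant_response.wav")
```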

For production deployment, configure batch processing, implement error handling for audio quality issues, and set up monitoring for latency and response quality.
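As one example of error handling for audio quality, incoming WAV files can be screened before they reach the model. This is a minimal sketch using Python's standard `wave` module. The 16 kHz and 0.2-second thresholds are placeholder assumptions, not requirements stated for Fun-Audio-Chat-8B.

```python
import wave
from typing import List


def validate_wav(path: str, min_seconds: float = 0.2, min_rate: int = 16000) -> List[str]:
    """Return problems found in a WAV file; an empty list means it looks usable."""
    try:
        with wave.open(path, "rb") as wav:
            rate = wav.getframerate()
            frames = wav.getnframes()
    except (wave.Error, EOFError, OSError) as exc:
        # Covers missing files, truncated files, and non-WAV content.
        return [f"unreadable audio: {exc}"]

    problems = []
    duration = frames / rate if rate else 0.0
    if rate < min_rate:
        problems.append(f"sample rate {rate} Hz is below {min_rate} Hz")
    if duration < min_seconds:
        problems.append(f"clip is {duration:.2f}s, shorter than {min_seconds}s")
    return problems


if __name__ == "__main__":
    for problem in validate_wav("user_question.wav"):
        print("rejecting input:", problem)
```

Rejecting bad input early keeps malformed uploads from surfacing as opaque model errors and gives the caller something actionable to report.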

Qwen-Image-Edit-2511 works through web UI or programmatic API calls with simple prompt structures: “Change the woman’s shirt to red” or “Make this a group photo with [reference image]”.

Real-World Use Cases & Implementation Scenarios

Customer Service Automation

Fun-Audio-Chat-8B’s emotion detection makes it ideal for customer support scenarios where frustrated callers need both solutions and empathy. The model recognizes escalating frustration and adjusts its tone to de-escalate while efficiently routing to appropriate solutions or human agents.

Companies testing the model report 40% reduction in call escalations when the AI successfully identifies and addresses emotional states early in interactions.

Content Creation Workflows

Qwen-Image-Edit-2511 streamlines visual content pipelines for social media managers, e-commerce businesses, and marketing teams. Instead of expensive photo shoots for every product variant, teams photograph once then use the model to generate color variations, background changes, and lifestyle context.

VoiceDesign-VD-Flash enables podcast creators to generate consistent intro/outro voices, segment transitions, and even simulate guest voices for creative storytelling formats.

Accessible Technology Development

All three models support accessibility applications:

- Vision Impairment Support: VoiceDesign creates more expressive, easier-to-understand screen readers with emotional prosody

- Communication Assistance: Fun-Audio-Chat-8B helps individuals with speech difficulties by interpreting impaired speech patterns and responding appropriately

- Educational Tools: Emotion-aware tutoring systems that recognize student frustration and adjust teaching approach

Smart Home & IoT Integration

The low latency and on-device deployment capability of Fun-Audio-Chat-8B make it practical for smart home hubs and IoT devices. Unlike cloud-dependent assistants, the model can run locally on higher-end edge devices, preserving privacy while delivering natural voice control.

Qwen Model Family Comparison

| Model | Release Date | Primary Function | Key Innovation | Parameters | License | Best For |
|---|---|---|---|---|---|---|
| Fun-Audio-Chat-8B | Dec 2025 | Speech-to-speech conversation | Emotion-aware, 50% compute reduction | ~8B | Apache 2.0 | Voice assistants, customer service |
| Qwen-Image-Edit-2511 | Dec 2025 | Image editing | Multi-person consistency | Undisclosed | Commercial use allowed | Professional photo editing, product design |
| VoiceDesign-VD-Flash | Dec 2025 | Controllable TTS | Custom voice from text prompts | Undisclosed | Part of Qwen3-TTS | Media production, audiobooks |
| Qwen3-Max | Sep 2025 | Text reasoning | 1T parameters, frontier performance | 1T | Commercial use allowed | Complex reasoning tasks |
| Qwen-Image-Edit-2509 | Sep 2025 | Image editing | Predecessor to 2511 | Undisclosed | Commercial use allowed | Basic image editing |

Pros and Cons

Fun-Audio-Chat-8B

Pros:

- Fully open-source under Apache 2.0 license with no usage restrictions

- Built-in emotion detection without requiring explicit prompts

- 50% computational efficiency gain vs traditional architectures

- Outperforms all comparable open-source speech models

- Supports complex function calling for task automation

- Self-hosting option preserves data privacy

Cons:

- Limited to English and Chinese languages currently

- Requires expensive GPU hardware (24GB VRAM minimum)

- Emotion accuracy degrades with poor audio quality

- Smaller model size (8B) limits reasoning depth vs larger LLMs

- Documentation still maturing compared to established alternatives

Qwen-Image-Edit-2511

Pros:

- Significant consistency improvements over previous version

- Integrated LoRA support eliminates fine-tuning steps

- Excellent geometric reasoning for technical drawings

- Supports variable aspect ratios and resolutions

- Available in multiple formats (standard, Lightning, GGUF, FP8)

- Free access via Qwen Chat web interface

Cons:

- Extreme edits still produce occasional artifacts

- Multi-person consistency degrades beyond 4 individuals

- Processing time increases significantly at high resolutions

- LoRA library coverage incomplete

- Requires substantial VRAM for local deployment (16GB+)

VoiceDesign-VD-Flash

Pros:

- Natural language voice control without preset templates

- Can create entirely new synthetic voices

- Outperforms GPT-4o-mini-tts on role-play benchmarks

- Fine-grained control over emotion, pace, and character

- Practical for real-time or near-real-time applications

- Ethical voice creation without cloning real people

Cons:

- Complex emotional nuances (sarcasm) remain challenging

- Voice quality varies based on instruction specificity

- Limited multilingual documentation available

- Accent replication less accurate than native generation

- Not yet widely tested in production environments

Detailed Technical Specifications

Fun-Audio-Chat-8B Architecture Details

Speech Processing:

- Dual-Resolution Representations: 5Hz backbone + 25Hz head

- Audio Encoder: Processes raw waveforms or pre-encoded features

- Semantic Processing: Integrated text LLM (Core-Cocktail trained)

- Output Synthesis: 25Hz high-quality speech generation via Fun-CosyVoice3-0.5B-2512

Training Methodology:

- Multi-stage training: Pre-training → Supervised Fine-Tuning → RLHF-style alignment

- Core-Cocktail strategy prevents catastrophic forgetting of text capabilities

- Multi-task post-training for semantic accuracy + emotional appropriateness

- Data sources: Conversation datasets, emotional speech corpora, function-calling examples

Inference Performance:

- Latency: ~200-400ms first-token response (hardware dependent)

- Throughput: Supports real-time audio streaming

- Batch Processing: Configurable for multiple concurrent conversations

- Memory Footprint: 24GB VRAM (fp16), ~12GB (quantized int8)
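The memory figures can be sanity-checked with simple arithmetic on the weights alone. This sketch assumes exactly 8 billion parameters, since the article only says "~8B", and counts nothing except weight storage.

```python
GIB = 1024 ** 3  # bytes per gibibyte


def weight_memory_gib(num_params: float, bytes_per_param: int) -> float:
    """Memory needed just to hold the weights, in GiB."""
    return num_params * bytes_per_param / GIB


if __name__ == "__main__":
    params = 8e9  # assumption: "~8B" taken as exactly 8 billion
    for name, width in (("fp16", 2), ("int8", 1)):
        print(f"{name}: {weight_memory_gib(params, width):.1f} GiB for weights")
```

At fp16 the weights come to roughly 15 GiB, and at int8 roughly 7.5 GiB. The gap between those numbers and the quoted 24GB and ~12GB footprints is presumably taken up by activations, caches, and the separate speech synthesis model, though the article does not break this down.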

Qwen-Image-Edit-2511 Technical Architecture

Model Foundation:

- Base: Vision-Language Model with diffusion backbone

- Context Window: Processes image + text prompt jointly

- Resolution Handling: Native 1024px with aspect ratio preservation

- LoRA Integration: Pre-loaded community LoRAs activated via prompts

Editing Capabilities:

- Object Addition/Removal: Seamless inpainting with context awareness

- Style Transfer: Multiple artistic styles without separate models

- Character Consistency: Identity preservation across multiple edits

- Geometric Operations: Rotation, scaling, perspective correction with auxiliary lines

Deployment Options:

- Web Interface: qwen.ai chat with Image Editing feature selected

- HuggingFace: Direct model download for local inference

- ComfyUI: Community nodes for workflow integration

- API: Programmatic access via Alibaba Cloud (availability varies by region)

VoiceDesign-VD-Flash Specifications

Control Dimensions:

- Timbre Control: Vocal quality, resonance, breathiness

- Prosody Management: Pitch contour, rhythm, stress patterns

- Emotional Expression: Joy, sadness, anger, fear, surprise, neutral

- Character Attributes: Age, gender, personality traits, social context

- Speaking Style: Formal/informal, pacing, emphasis distribution

Generation Modes:

- Zero-Shot Creation: Generate new voice from description only

- Voice Cloning: Replicate existing voice from sample (3-10 seconds typically)

- Hybrid: Modify cloned voice via natural language instructions

- Real-time Adaptation: Adjust synthesis parameters during generation

Performance Benchmarks:

- InstructTTS-Eval: Outperforms GPT-4o-mini-tts, Gemini-2.5-pro-tts

- Role-Play Evaluation: Superior character voice consistency

- Latency: Near-real-time synthesis (exact figures not disclosed)

- Quality: Comparable to commercial-grade TTS services

Limitations & Known Issues

Despite significant advances, these models have constraints worth noting:

Fun-Audio-Chat-8B:

- Currently supports only English and Chinese; multilingual expansion planned

- Requires substantial GPU resources (24GB VRAM minimum) limiting accessibility

- Emotion detection accuracy decreases with poor audio quality or heavy background noise

- Function calling reliability depends on clear, structured commands

Qwen-Image-Edit-2511:

- Extreme edits (major pose changes, full background replacements) sometimes produce artifacts

- Multi-person consistency works best with 2-4 individuals; larger groups show degraded quality

- LoRA integration, while improved, doesn’t cover all community-developed LoRAs

- Processing time increases significantly with higher resolutions

VoiceDesign-VD-Flash:

- Complex emotional nuances (sarcasm, subtle irony) remain challenging

- Custom voice generation quality varies based on instruction specificity

- Limited documentation for advanced control parameters

- Real-world accent replication less accurate than native accent generation

Testing Methodology Note: AdwaitX tested Fun-Audio-Chat-8B on local hardware (NVIDIA A100 40GB), Qwen-Image-Edit-2511 via both Qwen Chat web interface and ComfyUI local deployment, and reviewed VoiceDesign-VD-Flash documentation and benchmark reports as of December 31, 2025.

What This Means for the AI Industry

Alibaba’s December 2025 releases signal a strategic shift in the AI landscape. While Western labs like OpenAI and Anthropic space major releases months apart, Chinese companies are deploying a rapid-iteration strategy.

The company launched six Qwen3 model variants in September 2025 alone, including the 1-trillion-parameter Qwen3-Max, demonstrating production capacity that rivals or exceeds Silicon Valley counterparts.

Open-Source Strategy Implications:

Releasing powerful models under permissive licenses (Apache 2.0) accelerates adoption while building ecosystem lock-in. Developers who build applications on Qwen infrastructure become invested in the platform’s continued development, creating network effects that benefit Alibaba’s cloud services business.

This mirrors Meta’s strategy with Llama, but Alibaba’s multimodal focus (text, vision, speech) across the entire model family creates more comprehensive platform coverage.

Competitive Pressure:

OpenAI and Google now face open-source alternatives matching 80-90% of their proprietary models’ capabilities at zero marginal cost. This forces either aggressive pricing cuts or differentiation through features impossible to replicate in open models, likely driving innovation in areas requiring massive compute or proprietary datasets.

Developer Empowerment:

The technical innovations, particularly Fun-Audio-Chat-8B’s 50% efficiency gain and Core-Cocktail training, will likely be adopted by other open-source projects, raising the baseline quality of community-developed models.

Frequently Asked Questions (FAQs)

Is Fun-Audio-Chat-8B completely free to use commercially?

Yes, Fun-Audio-Chat-8B is released under Apache 2.0 license, which permits commercial use, modification, and distribution without royalties. Companies can deploy it in production applications, modify the architecture, or create derivative works without licensing fees.

How does Fun-Audio-Chat-8B compare to Whisper for speech recognition?

Whisper is a speech-to-text transcription model, while Fun-Audio-Chat-8B is a speech-to-speech conversational model. Whisper converts audio to text; Fun-Audio-Chat-8B understands audio and responds with audio directly, preserving emotional context that text transcription loses. They serve different use cases: Whisper for transcription, Fun-Audio-Chat-8B for interactive voice applications.

Can I run Qwen-Image-Edit-2511 on consumer hardware?

Yes, with limitations. The standard model requires approximately 16GB VRAM (RTX 4090, RTX 3090 Ti tier). Quantized versions (GGUF, FP8) reduce requirements to 12GB with minimal quality loss. Cloud services like Qwen Chat provide web access without local hardware requirements.
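The decision described above reduces to a simple threshold check. This sketch encodes only the 16GB and 12GB figures quoted in this answer; the function name and the returned labels are illustrative.

```python
def pick_variant(vram_gb: float) -> str:
    """Map available VRAM to the deployment option suggested in this article."""
    if vram_gb >= 16:
        return "standard"
    if vram_gb >= 12:
        return "quantized (GGUF or FP8)"
    return "web (Qwen Chat)"


if __name__ == "__main__":
    for vram in (24, 12, 8):
        print(f"{vram} GB -> {pick_variant(vram)}")
```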

What languages does VoiceDesign-VD-Flash support?

Current documentation doesn’t specify comprehensive language support, but the Qwen3-TTS family historically prioritizes Chinese and English. Alibaba’s previous TTS models supported 10+ languages; VoiceDesign-VD-Flash likely follows similar coverage, though this requires verification from official sources.

Does emotion detection in Fun-Audio-Chat-8B work across different cultures?

Emotional expression varies culturally: loudness, directness, and silence carry different meanings. Because Fun-Audio-Chat-8B was trained primarily on English and Chinese data, it may misinterpret emotional cues from other linguistic or cultural contexts. Cross-cultural deployment should include testing with representative user populations to identify blind spots.

Can I fine-tune Fun-Audio-Chat-8B for domain-specific applications?

Yes, the model architecture supports fine-tuning, and Alibaba provides training scripts in the GitHub repository. However, effective fine-tuning requires substantial domain-specific audio data and compute resources (4×80GB GPUs minimum). For narrow use cases, prompt engineering and few-shot examples often provide adequate customization without full fine-tuning.

What’s the difference between VoiceDesign-VD-Flash and voice cloning models?

Voice cloning models replicate specific people’s voices from audio samples. VoiceDesign-VD-Flash generates new, synthetic voices from text descriptions without requiring existing voice samples. It can also clone voices if samples are provided, but its unique capability is creating entirely original vocal identities from scratch.

Are there ethical concerns with emotion-aware AI?

Yes, significant ethical considerations exist:

- Manipulation Potential: Systems that detect and respond to emotions could exploit vulnerable emotional states

- Privacy Implications: Emotional state constitutes sensitive personal information

- Consent Requirements: Users should know when emotional analysis occurs

- Bias Risks: Emotion detection models may perform differently across demographics

Responsible deployment requires transparency about emotional analysis capabilities, user consent mechanisms, and regular bias audits.

Quick Answers

What is Fun-Audio-Chat-8B?

Fun-Audio-Chat-8B is Alibaba’s open-source speech-to-speech AI model with 8 billion parameters, released December 2025. It handles audio-to-audio conversations directly, understands emotions from speech patterns without labels, and reduces compute costs by 50% using dual-resolution architecture.

How accurate is Qwen-Image-Edit-2511?

Qwen-Image-Edit-2511 significantly reduces image drift and improves character consistency over its predecessor, particularly excelling in multi-person scenes with 2-4 individuals. It maintains identity features across complex edits better than previous versions, though extreme modifications may still produce artifacts.

Can VoiceDesign-VD-Flash create custom voices?

Yes, VoiceDesign-VD-Flash generates entirely new vocal identities from text descriptions alone, without requiring audio samples of real people. You describe desired voice characteristics (tone, age, accent, emotion) in natural language, and it creates a unique synthetic voice matching those specifications.

What GPU do I need for Fun-Audio-Chat-8B?

Fun-Audio-Chat-8B requires approximately 24GB VRAM for inference, which means NVIDIA RTX 3090/4090 (24GB), RTX A5000 (24GB), or enterprise GPUs like A100/H100. Training requires 4× 80GB GPUs (A100 or H100 tier).

Is Fun-Audio-Chat-8B better than GPT-4o Audio?

Fun-Audio-Chat-8B achieves comparable performance to GPT-4o Audio on benchmarks while outperforming all open-source alternatives in its parameter class. Key advantages: Apache 2.0 license, self-hosting capability, 50% compute efficiency, and built-in emotion detection. GPT-4o Audio offers broader language support and API convenience.

Where can I download Qwen models?

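Qwen’s open models are available on HuggingFace (huggingface.co/Qwen), ModelScope (Alibaba’s platform), and GitHub (github.com/QwenLM). Fun-Audio-Chat-8B is published separately under the FunAudioLLM organization: FunAudioLLM/Fun-Audio-Chat-8B on HuggingFace and github.com/FunAudioLLM/Fun-Audio-Chat on GitHub. Qwen-Image-Edit-2511 and Fun-Audio-Chat-8B support both cloud deployment and local installation.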