Quick Brief

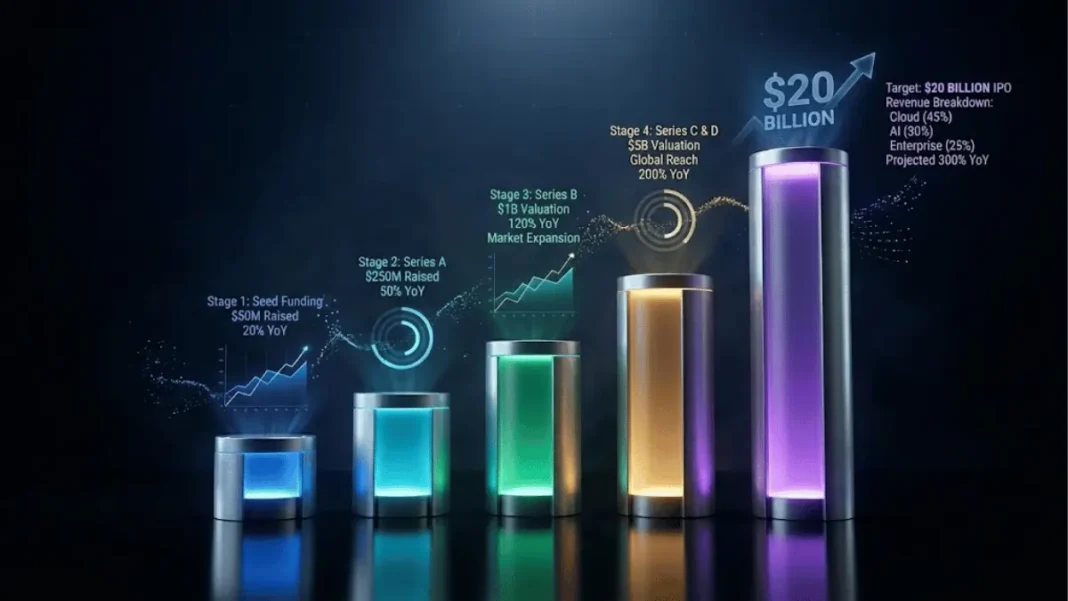

- The Growth: OpenAI achieved a $20B+ annualized revenue run rate in 2025, 10X growth from $2B in 2023, powered by compute expansion from 0.2 GW to 1.9 GW

- The Strategy: Company operates multi-tier monetization across subscriptions, APIs, advertising, and commerce, targeting “hundreds of billions by 2030”

- The Infrastructure: OpenAI committed $1.4 trillion over eight years to data center capacity, including $300 billion with Oracle

- The Impact: 700 million weekly active users and 5 million business customers position OpenAI to compete in enterprise AI and digital advertising

OpenAI CFO Sarah Friar announced on January 17, 2026, that the company achieved over $20 billion in annualized revenue for 2025, representing 10X growth from $2 billion ARR in 2023. The announcement detailed how OpenAI’s business model “scales with the value of intelligence” through diversified revenue streams spanning consumer subscriptions, enterprise platforms, developer APIs, advertising, and commerce integrations.

The Compute-Revenue Flywheel

OpenAI’s revenue trajectory directly tracks compute capacity expansion. Compute grew 3X year-over-year from 0.2 gigawatts in 2023 to 0.6 GW in 2024 and approximately 1.9 GW in 2025. Revenue followed the same curve, scaling from $2 billion ARR in 2023 to $6 billion in 2024 and exceeding $20 billion in 2025.
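The growth multiples quoted above can be checked with a short calculation. This is a minimal sketch that uses only the figures stated in this article; the variable and function names are illustrative, and the 2025 revenue value is treated as a floor because the source says "exceeding $20 billion".

```python
# Figures quoted in the article: compute in gigawatts, revenue run rate in $ billions.
compute_gw = {2023: 0.2, 2024: 0.6, 2025: 1.9}
revenue_arr_b = {2023: 2.0, 2024: 6.0, 2025: 20.0}  # 2025 is a lower bound

def yoy_multiples(series):
    """Return {year: value / previous year's value} for consecutive years."""
    years = sorted(series)
    return {cur: series[cur] / series[prev] for prev, cur in zip(years, years[1:])}

compute_growth = yoy_multiples(compute_gw)
revenue_growth = yoy_multiples(revenue_arr_b)

# Revenue run rate per gigawatt of compute, in $ billions per GW.
revenue_per_gw = {year: revenue_arr_b[year] / compute_gw[year] for year in compute_gw}

# Cumulative revenue multiple from 2023 to 2025.
overall_revenue_multiple = revenue_arr_b[2025] / revenue_arr_b[2023]

for year in compute_growth:
    print(
        f"{year}: compute x{compute_growth[year]:.2f}, "
        f"revenue x{revenue_growth[year]:.2f}, "
        f"${revenue_per_gw[year]:.1f}B per GW"
    )
print(f"2023 to 2025 revenue multiple: x{overall_revenue_multiple:.0f}")
```

On these numbers, compute grows by roughly 3.0X and then 3.2X, revenue by 3.0X and then at least 3.3X, and the run rate stays near $10 billion per gigawatt in each year, which is what "revenue followed the same curve" amounts to arithmetically.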

This represents “never-before-seen growth at such scale,” according to Friar. The company’s revenue expansion demonstrates how infrastructure capacity enables AI adoption, creating what OpenAI describes as a flywheel: “Investment in compute powers leading-edge research and step-change gains in model capability. Stronger models unlock better products and broader adoption of the OpenAI platform. Adoption drives revenue, and revenue funds the next wave of compute and innovation”.

ChatGPT serves 700 million weekly active users, with both weekly active users (WAU) and daily active users (DAU) reaching all-time highs. The platform supports 5 million paying business users across Enterprise, Team, and Education subscription tiers.

AdwaitX Analysis: Infrastructure as Competitive Moat

OpenAI’s $1.4 trillion infrastructure commitment over eight years establishes compute certainty as a strategic advantage in AI markets where GPU access defines scaling capacity. The company transitioned from relying on a single compute provider three years ago to managing a diversified ecosystem of hardware partners.

The Oracle partnership alone represents $300 billion in cloud computing contracts, among the largest enterprise technology deals in history. This diversification enables OpenAI to optimize inference costs to “cents per million tokens,” making AI economically viable for everyday workflows beyond elite use cases.

The scale economics shift AI from experimental technology to foundational infrastructure. OpenAI positions compute management as “an actively managed portfolio” where frontier models train on premium hardware when capability matters most, while high-volume workloads run on lower-cost infrastructure optimized for efficiency.

Revenue Architecture Across Five Verticals

OpenAI’s monetization strategy spans consumer subscriptions, workplace subscriptions with usage-based pricing, API platforms for developers and enterprises, commerce integrations, and advertising.

Consumer subscriptions include ChatGPT Plus at $20 monthly and ChatGPT Pro at $200 monthly. ChatGPT Go launched globally in 171 countries at $8 monthly, providing expanded access to messaging, image creation, file uploads, and memory.

The API and developer platform enables enterprises to embed intelligence through usage-based pricing that scales with production workloads. Spending grows “in direct proportion to outcomes delivered,” according to OpenAI’s business model framework.

Advertising represents the newest monetization layer. OpenAI plans to begin testing ads in the coming weeks for U.S. users on free and Go tiers, with sponsored products appearing beneath ChatGPT responses when relevant to user conversations. Ads will be “clearly labeled and separated from the organic answer,” with Pro, Business, and Enterprise subscriptions remaining ad-free.

Advertising Principles and Privacy Safeguards

OpenAI published five core principles governing its advertising approach: mission alignment with making AI accessible, answer independence ensuring ads never influence ChatGPT responses, conversation privacy protecting user data from advertisers, user choice and control over personalization, and long-term value prioritizing trust over time spent.

The company commits that “ads do not influence the answers ChatGPT gives you” and “we keep your conversations with ChatGPT private from advertisers, and we never sell your data to advertisers”. Users can disable personalization and clear ad-related data at any time.

Initial ad formats will not appear in accounts for users under 18 or near sensitive topics including health, mental health, or politics. CEO of Applications Fidji Simo stated that “the best ads are useful, entertaining, and help people discover new products and services”.

Valuation Trajectory and Capital Strategy

OpenAI reached a $500 billion valuation in October 2025 and is now in discussions to raise up to $100 billion at valuations between $750 billion and $830 billion. The potential valuation increase of 50% to 66% reflects investor confidence in OpenAI’s revenue scaling and infrastructure positioning.
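The quoted percentage range follows directly from the three valuation figures. The sketch below is illustrative only: the helper name `pct_increase` is hypothetical, and the inputs are the figures stated in this article.

```python
# Valuation figures from the article, in $ billions.
current_valuation_b = 500.0
target_low_b = 750.0
target_high_b = 830.0

def pct_increase(old, new):
    """Percentage increase from old to new."""
    return (new - old) / old * 100.0

low_uplift = pct_increase(current_valuation_b, target_low_b)
high_uplift = pct_increase(current_valuation_b, target_high_b)

print(f"Implied uplift: {low_uplift:.0f}% to {high_uplift:.0f}%")
```

The low end of the range corresponds to the $750 billion figure and the high end to $830 billion, both measured against the $500 billion valuation.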

The company manages capital deployment through structured commitments made years in advance, with “capital committed in tranches against real demand signals” to balance capacity expansion against actual usage growth. This approach allows OpenAI to “lean forward when growth is there without locking in more of the future than the market has earned”.

OpenAI’s balance sheet strategy prioritizes partnering over owning infrastructure, with flexibility structured across multiple providers and hardware types to manage timing gaps between capacity and usage.

Roadmap: Practical Adoption in 2026

OpenAI’s stated focus for 2026 is “practical adoption” that closes the gap between AI capabilities and real-world implementation across health, science, and enterprise sectors. The company is developing agents and workflow automation systems that “run continuously, carry context over time, and take action across tools”.

For individuals, this means AI that manages projects, coordinates plans, and executes tasks autonomously. For organizations, it becomes “an operating layer for knowledge work” that transforms business operations.

As intelligence moves into scientific research, drug discovery, energy systems, and financial modeling, OpenAI anticipates new economic models including licensing, IP-based agreements, and outcome-based pricing that “share in the value created”. The company projects this evolution will mirror how the internet developed commercial models over time.

Frequently Asked Questions (FAQs)

What is OpenAI’s current annual revenue?

OpenAI achieved an annualized revenue run rate of over $20 billion in 2025, representing 10X growth from $2 billion in 2023.

How does OpenAI plan to monetize ChatGPT advertising?

OpenAI will test ads beneath ChatGPT responses for free and Go tier users, with sponsored products clearly labeled and separated from organic answers. Pro, Business, and Enterprise tiers remain ad-free.

What infrastructure commitments has OpenAI made?

OpenAI committed $1.4 trillion over eight years to data center capacity, including a $300 billion deal with Oracle for cloud computing.

How many users does ChatGPT currently serve?

ChatGPT serves 700 million weekly active users and 5 million paying business customers across Enterprise, Team, and Education tiers.