Essential Points

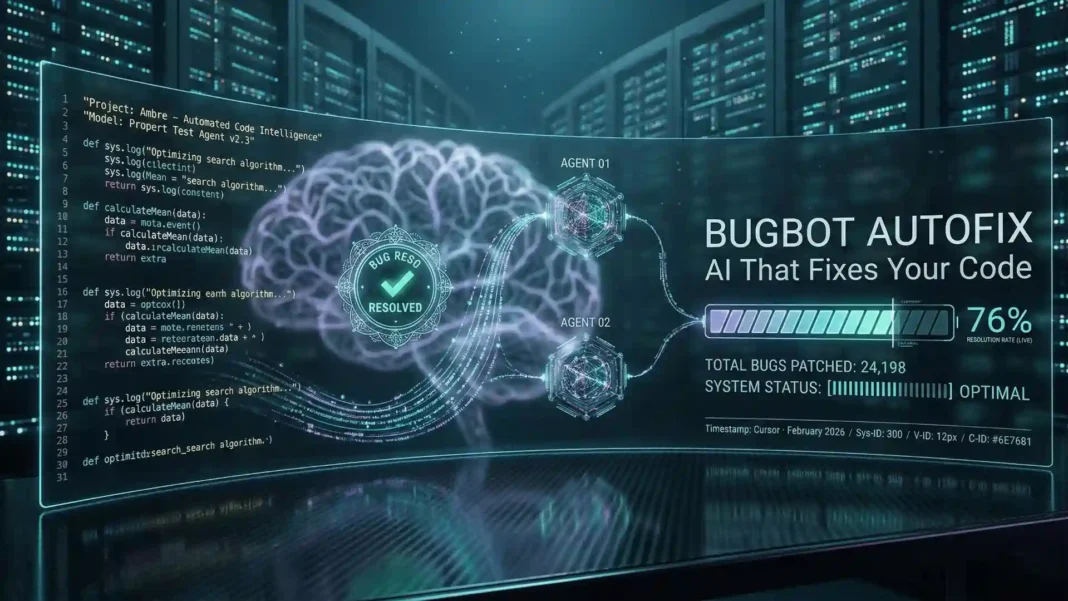

- Bugbot Autofix exited beta and is now available to all Bugbot users as of February 25, 2026

- Over 35% of Bugbot Autofix proposed changes are merged into the base pull request

- Bug resolution rate rose from 52% to 76% while issues identified per run nearly doubled in six months

- Bugbot reviews more than 2 million pull requests per month for customers including Rippling, Discord, Samsara, Airtable, and Sierra AI

Most AI code review tools tell you where bugs are. Cursor Bugbot Autofix goes further and proposes a tested fix before you ever touch the PR. Released to general availability on February 25, 2026, Autofix closes the loop between bug detection and resolution, and the merge data already validates the approach.

What Cursor Bugbot Autofix Actually Does

Bugbot reviews more than 2 million pull requests per month for customers including Rippling, Discord, Samsara, Airtable, and Sierra AI, and Cursor also runs it on all of its internal code. The original Bugbot workflow identified bugs and posted them as PR comments, leaving developers to write every fix by hand. Autofix closes that loop.

When a PR is created, Autofix spawns cloud agents that run in their own isolated virtual machines, test your software independently, and then propose fixes directly on the PR. Once you enable Autofix from the Bugbot dashboard, every PR Bugbot reviews will include proposed fixes for the issues it finds, giving your team a head start on code review.

This is not a linter or a static analyzer. It is a cloud agent that executes real tests against real code changes before presenting a resolution.
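The core idea, proposing a fix only after it has been exercised against tests in an environment separate from the developer's, can be sketched in a few lines of Python. This is an illustration under stated assumptions, not Cursor's implementation: a throwaway directory plus a separate interpreter process stands in for an isolated VM, and `fix_passes_tests`, the `listutil` module, and the candidate patches are all hypothetical.

```python
import subprocess
import sys
import tempfile
from pathlib import Path


def fix_passes_tests(module_name: str, patched_source: str,
                     test_source: str, timeout: float = 30.0) -> bool:
    """Write a candidate patch and a test script into a throwaway directory,
    run the test in a separate Python process, and report whether it passed.

    Hypothetical helper: a temp dir + subprocess stands in for an isolated VM.
    """
    with tempfile.TemporaryDirectory() as workdir:
        Path(workdir, f"{module_name}.py").write_text(patched_source)
        Path(workdir, "check.py").write_text(test_source)
        result = subprocess.run(
            [sys.executable, "check.py"],
            cwd=workdir,
            capture_output=True,
            timeout=timeout,
        )
        return result.returncode == 0


# Hypothetical example: `last()` should return the final element of a list.
test = "from listutil import last\nassert last([1, 2, 3]) == 3\n"
candidates = {
    "first element": "def last(xs):\n    return xs[0]\n",
    "negative index": "def last(xs):\n    return xs[-1]\n",
}

# Only candidates that pass the test would be proposed on the PR.
proposals = [name for name, src in candidates.items()
             if fix_passes_tests("listutil", src, test)]
print(proposals)  # ['negative index']
```

Running each candidate in its own process keeps a crashing or misbehaving patch from affecting the reviewer itself, which is the same motivation, at a much smaller scale, as giving each agent its own VM.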

The Performance Numbers That Matter

Bug detection rate matters only if those detections translate into fixes that engineers actually merge. Bugbot’s resolution rate, the percentage of flagged bugs that authors resolve before the PR is merged, climbed from 52% to 76% over six months. Over the same period, the average number of issues identified per run nearly doubled.

Cursor’s “Building Bugbot” engineering post gives more precise figures: average bugs flagged per run rose from 0.4 to 0.7, and resolved bugs per PR more than doubled, from roughly 0.2 to about 0.5. The combination matters because most review tools trade one against the other: flagging more bugs usually brings more false positives, yet here both the volume of findings and the share that authors acted on went up.
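The two trends compound, which is why resolved bugs per PR grew faster than either metric alone. A quick check multiplies bugs flagged per run by the resolution rate, assuming one Bugbot run per PR and pairing the 0.4 and 0.7 figures with the 52% and 76% rates quoted above (an assumption: the two sets of numbers may cover slightly different windows).

```python
def resolved_per_run(flagged_per_run: float, resolution_rate: float) -> float:
    """Expected resolved bugs per run = bugs flagged per run x share resolved.

    Assumes one Bugbot run per PR, so per-run and per-PR figures coincide.
    """
    return flagged_per_run * resolution_rate


before = resolved_per_run(0.4, 0.52)  # start of the six-month window
after = resolved_per_run(0.7, 0.76)   # end of the window
print(f"before: {before:.2f}, after: {after:.2f}, ratio: {after / before:.1f}x")
# before: 0.21, after: 0.53, ratio: 2.6x
```

The products, about 0.21 and 0.53, line up with the "roughly 0.2 to about 0.5" resolved bugs per PR reported in the post.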

Over 35% of all Autofix-proposed changes are merged directly into the base PR. For engineering teams shipping at high velocity, each accepted proposal is one fix a developer did not have to write by hand, which can shorten review cycles.

How Cursor Built Bugbot to This Level

Cursor’s engineering team ran 40 major experiments to move Bugbot’s resolution rate from 52% to over 70% before Autofix launched. The most impactful early improvement was running multiple bug-finding passes in parallel with randomized diff ordering, then using majority voting to filter issues flagged only once.
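The parallel-passes-plus-voting idea can be sketched as below. It is a minimal illustration, not Bugbot’s code: the detector is a hypothetical stand-in for a model pass, `passes=8` is an arbitrary choice, and `min_votes=2` encodes "drop issues flagged only once".

```python
import itertools
import random
import threading
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Iterable, Sequence

# Hypothetical detector signature: diff hunks in some order -> issue ids.
Detector = Callable[[Sequence[str]], Iterable[str]]


def majority_vote(hunks: Sequence[str], detect: Detector, passes: int = 8,
                  min_votes: int = 2, seed: int = 0) -> set:
    """Run `passes` detector passes, each over a shuffled copy of the diff,
    and keep only issues reported by at least `min_votes` passes."""
    rng = random.Random(seed)
    orders = []
    for _ in range(passes):
        order = list(hunks)
        rng.shuffle(order)  # each pass sees the hunks in a different order
        orders.append(order)

    # Passes are independent, so they can run concurrently.
    with ThreadPoolExecutor() as pool:
        results = list(pool.map(detect, orders))

    votes = Counter()
    for issues in results:
        votes.update(set(issues))  # a pass votes at most once per issue
    return {issue for issue, n in votes.items() if n >= min_votes}


# Toy detector: always finds one real bug, plus a unique spurious issue per pass.
_ids = itertools.count()
_lock = threading.Lock()


def toy_detect(order: Sequence[str]) -> list:
    with _lock:
        n = next(_ids)
    return ["off-by-one in paginate()", f"spurious-{n}"]


print(majority_vote(["hunk-a", "hunk-b", "hunk-c"], toy_detect))
# {'off-by-one in paginate()'}
```

Shuffling the diff matters because a model's attention can depend on input order; an issue that only surfaces under one particular ordering is more likely to be noise than a real bug.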

The largest single quality jump came when Cursor switched Bugbot to a fully agentic architecture. The agent reasons over the diff, calls tools dynamically, and decides where to dig deeper rather than following a fixed pass sequence. This shift also changed Cursor’s prompting strategy: earlier versions restrained the model to minimize false positives, but the agentic approach required the opposite, pushing the agent to investigate every suspicious pattern aggressively.

Cursor also built Bugbot rules, which let teams encode codebase-specific checks, such as flagging unsafe migrations or incorrect use of internal APIs, without those checks being hardcoded into the system.
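Cursor’s actual rule format is not described here, so the following only illustrates the design idea: codebase-specific checks live as data that a team can edit, while the engine that applies them stays generic. The `Rule` class, the regex rules, and `legacy_billing` are hypothetical, and regex matching is a deliberate simplification that should not be read as how Bugbot evaluates rules.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    """A team-defined check kept as data, separate from the engine that runs it."""
    name: str
    pattern: re.Pattern
    message: str


# Hypothetical rules for a hypothetical codebase.
RULES = [
    Rule("unsafe-migration", re.compile(r"\bDROP\s+COLUMN\b", re.IGNORECASE),
         "Dropping a column while old code may still read it is unsafe."),
    Rule("internal-api", re.compile(r"\blegacy_billing\.charge\("),
         "Call billing_v2.charge(); legacy_billing is deprecated."),
]


def check_diff(diff: str, rules=RULES) -> list:
    """Return (rule name, diff line number, message) for each added line that matches."""
    findings = []
    for lineno, line in enumerate(diff.splitlines(), start=1):
        if not line.startswith("+") or line.startswith("+++"):
            continue  # only inspect lines the PR adds, skipping file headers
        for rule in rules:
            if rule.pattern.search(line[1:]):
                findings.append((rule.name, lineno, rule.message))
    return findings


diff = """\
--- a/migrations/0042.sql
+++ b/migrations/0042.sql
@@ -1 +1 @@
-ALTER TABLE users ADD COLUMN nickname TEXT;
+ALTER TABLE users DROP COLUMN email;
"""
for name, lineno, message in check_diff(diff):
    print(f"{name} (diff line {lineno}): {message}")
# unsafe-migration (diff line 5): Dropping a column while old code may still read it is unsafe.
```

Adding a new invariant means appending to `RULES`; `check_diff` itself never changes, which is the separation the rules feature is meant to provide.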

The Resolution Rate Metric Explained

Teams often ask how to measure the actual impact Bugbot has on their codebase. Cursor built a metric called the resolution rate specifically to answer that question.

At PR merge time, an AI model determines which bugs flagged by Bugbot were actually resolved in the final code by the author. Cursor spot-checked every example internally with PR authors and found the LLM correctly classified nearly all cases as resolved or not. This metric is surfaced prominently in the Bugbot dashboard so teams can track quality improvements over time rather than relying on anecdotal feedback or emoji reactions on comments.
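Once each flagged bug carries a resolved-or-not label, the metric itself is plain aggregation. The sketch below assumes those labels already exist (the AI classification step is out of scope) and groups them by period so a trend is visible; `FlaggedBug` and the sample labels are hypothetical, not real Bugbot data.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class FlaggedBug:
    period: str              # e.g. the month the PR merged
    resolved_at_merge: bool  # in Bugbot, an AI model makes this call at merge time


def resolution_rate_by_period(bugs: list) -> dict:
    """Share of flagged bugs resolved before merge, grouped by period."""
    counts = defaultdict(lambda: [0, 0])  # period -> [resolved, flagged]
    for bug in bugs:
        counts[bug.period][0] += bug.resolved_at_merge
        counts[bug.period][1] += 1
    return {period: resolved / flagged
            for period, (resolved, flagged) in sorted(counts.items())}


# Hypothetical labels, for illustration only.
bugs = (
    [FlaggedBug("2025-09", r) for r in (True, False, True, False)]
    + [FlaggedBug("2026-02", r) for r in (True, True, True, False)]
)
print(resolution_rate_by_period(bugs))
# {'2025-09': 0.5, '2026-02': 0.75}
```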

What Cursor Is Building Next

Bugbot Autofix is the first event-driven agent automation in Cursor’s product roadmap. A PR creation event now triggers a fully autonomous agent workflow without any human initiation. Cursor is working to extend this architecture so teams can configure custom workflow automations for processes beyond code review.
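The shift from "a human starts the agent" to "an event starts the agent" is an ordinary publish/subscribe pattern. A minimal in-process sketch follows, assuming hypothetical workflow names and an event string loosely modeled on GitHub’s `pull_request` webhook with action `opened`; a production system would receive events over a webhook and queue the work rather than run it inline.

```python
from collections import defaultdict
from typing import Callable


class EventBus:
    """Minimal in-process event dispatcher: events trigger registered workflows."""

    def __init__(self) -> None:
        self._handlers = defaultdict(list)  # event type -> list of workflows

    def on(self, event_type: str) -> Callable:
        """Decorator that registers a workflow for an event type."""
        def register(fn: Callable[[dict], str]) -> Callable[[dict], str]:
            self._handlers[event_type].append(fn)
            return fn
        return register

    def dispatch(self, event_type: str, payload: dict) -> list:
        """Run every workflow registered for the event; no human initiation needed."""
        return [fn(payload) for fn in self._handlers[event_type]]


bus = EventBus()


@bus.on("pull_request.opened")
def review_and_autofix(payload: dict) -> str:
    return f"review PR #{payload['number']} and propose fixes"


@bus.on("pull_request.opened")
def team_custom_workflow(payload: dict) -> str:
    return f"run team checklist on PR #{payload['number']}"


print(bus.dispatch("pull_request.opened", {"number": 123}))
# ['review PR #123 and propose fixes', 'run team checklist on PR #123']
```

Custom automations of the kind Cursor describes would correspond to teams registering their own handlers, such as `team_custom_workflow`, on the same events.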

Three additional capabilities are in development. First, Bugbot will be able to run code to verify its own bug reports before posting them. Second, deep research mode will let Bugbot investigate complex multi-file issues more thoroughly. Third, an always-on version will continuously scan your codebase rather than waiting for PR events.
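Verifying a report before posting it amounts to attaching an executable reproduction and keeping the report only if that reproduction actually fails. The sketch below is an assumption about how such a check could look, not a description of Cursor’s planned feature; `median`, `BugReport`, and both reproductions are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


def median(xs: list) -> float:
    """Code under review (hypothetical) with a real bug for even-length input."""
    return sorted(xs)[len(xs) // 2]


@dataclass
class BugReport:
    summary: str
    reproduce: Callable[[], None]  # raises if the reported bug is real


def verified(reports: list) -> list:
    """Keep only reports whose reproduction actually fails when run."""
    kept = []
    for report in reports:
        try:
            report.reproduce()
        except Exception:
            # The code misbehaved as the report claims: keep it.
            kept.append(report)
        # If reproduce() ran cleanly, the report is treated as a false positive.
    return kept


def repro_even() -> None:
    assert median([1, 2, 3, 4]) == 2.5  # buggy median returns 3


def repro_unsorted() -> None:
    assert median([3, 1, 2]) == 2  # passes: the input is sorted first


reports = [
    BugReport("median() is wrong for even-length lists", repro_even),
    BugReport("median() ignores unsorted input", repro_unsorted),
]
print([r.summary for r in verified(reports)])
# ['median() is wrong for even-length lists']
```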

Limitations Worth Knowing

Bugbot Autofix proposes and tests fixes within the scope of a PR diff. A merge rate of just over 35% also means that close to two-thirds of proposed fixes are not merged as proposed, so developer review remains essential. Teams with complex multi-repo setups, heavy infrastructure-as-code, or deep cross-service dependency graphs may encounter cases where Bugbot’s fix proposals need significant human context to evaluate correctly.

Cursor acknowledges that new models will arrive regularly with different strengths and weaknesses, and that continued progress requires finding the right combination of models, harness design, and review structure.

Frequently Asked Questions (FAQs)

What is Cursor Bugbot Autofix?

Cursor Bugbot Autofix is a code review feature that spawns cloud agents in isolated virtual machines to test your software and automatically propose fixes for bugs found in pull requests. Once enabled from the Bugbot dashboard, every PR Bugbot reviews will include proposed fixes.

When did Bugbot Autofix become generally available?

Bugbot Autofix exited beta and became available to all Bugbot users on February 25, 2026. Cursor announced the general availability alongside updated performance metrics showing a 76% bug resolution rate across Bugbot’s user base.

What percentage of Autofix fixes get merged?

Over 35% of Bugbot Autofix proposed changes are merged directly into the base pull request. The rest are not merged as proposed: Autofix suggests changes rather than forcing them, and developers decide which ones to accept.

How does Cursor measure Bugbot’s effectiveness?

Cursor uses a metric called resolution rate, which measures the percentage of bugs flagged by Bugbot that are actually resolved by the author before the PR merges. An AI model classifies each case at merge time, and Cursor found it correctly classified nearly all examples when verified internally with PR authors.

Who is currently using Bugbot at scale?

Bugbot reviews more than 2 million pull requests per month for customers including Rippling, Discord, Samsara, Airtable, and Sierra AI. Cursor also runs Bugbot on all of its own internal code as a required pre-merge step.

What is Cursor planning to add to Bugbot next?

Cursor is developing three major capabilities: Bugbot verifying its own findings by running code before posting, deep research mode for complex issues, and an always-on version that continuously scans your codebase without requiring a PR event to trigger it.

How does Bugbot’s agentic architecture differ from earlier versions?

Early Bugbot ran fixed sequences of parallel passes with majority voting. The current agentic version reasons over the diff, calls tools dynamically, and decides where to investigate further at runtime. This shift produced the largest single quality gain in Bugbot’s development history.