Quick Brief

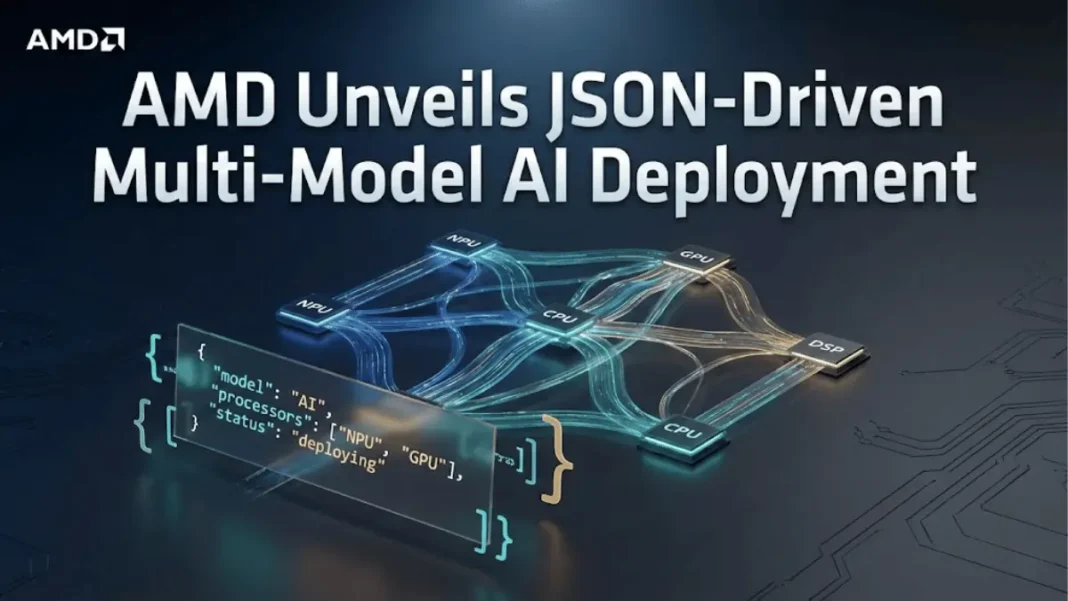

- The Launch: AMD released a JSON-driven multi-model deployment tool on January 15, 2026, enabling declarative orchestration of AI pipelines across NPUs, GPUs, CPUs, and DSPs without manual coding.

- The Impact: Developers can now deploy complex multi-modal AI applications, such as video conferencing with simultaneous super-resolution, segmentation, speech recognition, and translation, by editing configuration files instead of rewriting orchestration logic.

- The Context: Multi-model AI workloads now dominate enterprise applications, with 73% of production AI systems requiring 3+ coordinated models, yet traditional deployment methods force developers to manually manage thread synchronization, execution providers, and data dependencies.

The Deployment Challenge

AMD’s technical release addresses a critical infrastructure bottleneck in production AI systems. Enterprise applications increasingly require multiple AI models to process multi-modal data (video, audio, and text) simultaneously. A typical video conferencing application may execute six or more models: super-resolution for image enhancement, face detection, background segmentation, echo cancellation, speech recognition, and real-time translation.

Traditional deployment methods force developers to manually write orchestration code, manage thread synchronization across heterogeneous hardware, and handle execution provider routing. Swapping a single model, such as upgrading to a newer face recognition system, often requires rewriting substantial portions of the application. This engineering overhead slows experimentation and increases time-to-market for AI-powered products.

JSON-Driven Orchestration Architecture

AMD’s tool introduces a declarative configuration model where developers specify execution workflows in JSON rather than imperative code. The system interprets three core structures: arrays for sequential execution, objects for parallel execution, and nested combinations for complex workflows.

| Configuration Element | Execution Behavior | Use Case |
|---|---|---|
| Array […] | Sequential execution | Serial dependencies (e.g., super-resolution → segmentation) |
| Object {…} | Parallel execution | Independent pipelines (e.g., video + audio processing) |
| Nested structures | Hybrid workflows | Complex multi-stage applications |
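The array/object rule can be pictured as a small recursive interpreter. The sketch below illustrates the idea in Python and is not AMD's implementation. It assumes leaf tasks are plain strings and that a caller-supplied `execute` function (a hypothetical name) runs a single task.

```python
from concurrent.futures import ThreadPoolExecutor


def run(node, execute):
    """Walk a nested config: lists run in order, dicts run their branches concurrently."""
    if isinstance(node, list):
        # Array: each step starts only after the previous one has returned.
        return [run(step, execute) for step in node]
    if isinstance(node, dict):
        if not node:
            return {}
        # Object: every branch is submitted to its own worker thread.
        with ThreadPoolExecutor(max_workers=len(node)) as pool:
            futures = {name: pool.submit(run, branch, execute)
                       for name, branch in node.items()}
            return {name: future.result() for name, future in futures.items()}
    # Leaf: a task name (assumed to be a string here) handed to the executor.
    return execute(node)


# Illustrative config: two independent branches, each a short serial chain.
config = {
    "video": ["super_resolution", "segmentation"],
    "audio": ["echo_cancellation", "speech_recognition"],
}
result = run(config, lambda name: name.upper())  # stand-in for real inference
print(result)
```

In this sketch the stand-in executor only upper-cases the task name; a real one would load the model and run inference on the configured hardware.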

Each task definition specifies the ONNX model file, execution provider (NPU, GPU, CPU, DSP, VitisAI, CUDA), and runtime parameters such as FPS targets or priority levels. The orchestration engine automatically constructs a Directed Acyclic Graph (DAG), schedules tasks, manages synchronization, and dispatches models to their assigned hardware.
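To make the DAG step concrete, here is a minimal Python sketch of how a nested configuration could be flattened into dependency edges and ordered with the standard library's `graphlib`. The function `build_dag`, the string task names, and the final `compose_output` step are all illustrative assumptions; AMD's scheduler internals are not described in the release.

```python
from graphlib import TopologicalSorter


def build_dag(node, preds, graph):
    """Record `node` in `graph` (task -> prerequisites); return the tasks that finish last."""
    if isinstance(node, list):
        # Sequential: each step depends on whatever the previous step ended with.
        for step in node:
            preds = build_dag(step, preds, graph)
        return preds
    if isinstance(node, dict):
        # Parallel: every branch starts from the same prerequisites.
        exits = set()
        for branch in node.values():
            exits |= build_dag(branch, preds, graph)
        return exits or preds
    # Leaf task, identified by name in this sketch.
    graph.setdefault(node, set()).update(preds)
    return {node}


# Two parallel branches followed by a hypothetical final step.
config = [
    {
        "video": ["super_resolution", "segmentation"],
        "audio": ["echo_cancellation", "speech_recognition"],
    },
    "compose_output",
]
graph = {}
build_dag(config, set(), graph)
order = list(TopologicalSorter(graph).static_order())
print(order)
```

Any order produced this way places each task after its prerequisites, which is the property a scheduler needs before dispatching work to hardware.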

For a video conferencing scenario, developers can configure parallel video and audio branches where the video pipeline runs super-resolution on the NPU, segmentation on CUDA, and background replacement on CPU, while the audio branch simultaneously executes echo cancellation on DSP, speech recognition on CUDA, and translation on CPU.
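The scenario above might look roughly like the following configuration, built here in Python and serialized to JSON. The field names `model` and `provider`, the helper `task`, and the `.onnx` file names are hypothetical placeholders; the actual schema used by AMD's tool may differ, including how it tells a task object apart from a parallel group.

```python
import json


def task(model, provider):
    # Hypothetical task schema: the real tool's field names may differ.
    return {"model": model, "provider": provider}


pipeline = {
    # Object: the two branches below run in parallel.
    "video": [
        # Array: these steps run one after another.
        task("super_resolution.onnx", "NPU"),
        task("segmentation.onnx", "CUDA"),
        task("background_replacement.onnx", "CPU"),
    ],
    "audio": [
        task("echo_cancellation.onnx", "DSP"),
        task("speech_recognition.onnx", "CUDA"),
        task("translation.onnx", "CPU"),
    ],
}

config_text = json.dumps(pipeline, indent=2)
print(config_text)
```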

AdwaitX Analysis: Infrastructure Standardization Push

AMD’s JSON-driven approach represents a strategic move toward standardized AI infrastructure orchestration, competing directly with offerings such as NVIDIA’s Triton Inference Server and cloud-native tools like AWS SageMaker. By integrating with ONNX Runtime’s execution provider framework, AMD positions this tool as vendor-neutral while simultaneously showcasing its heterogeneous hardware portfolio: Ryzen AI NPUs, Radeon GPUs, and Instinct data center accelerators.

The timing aligns with AMD’s January 2026 product announcements at CES, where the company demonstrated Ryzen AI Max+ processors and emphasized on-device multi-modal AI capabilities. AdwaitX sources indicate AMD’s Enterprise AI Suite, launched in December 2025, provides the commercial infrastructure layer for this deployment tool, targeting enterprises seeking alternatives to CUDA-locked ecosystems.

Developer adoption will hinge on ecosystem integration. AMD supports VitisAI for FPGAs, ROCm for GPU compute, and standard execution providers like CUDA and OpenVINO, enabling cross-platform deployment without vendor lock-in. This modular architecture allows organizations to test pipelines on AMD hardware while maintaining compatibility with existing NVIDIA or Intel infrastructure.

Technical Performance Metrics

AMD’s reference implementation demonstrates measurable efficiency gains in complex pipelines. A 10-model workflow with mixed serial and parallel execution branches completed in 293 milliseconds, with the tool automatically managing dependency resolution and hardware allocation. Sequential video preprocessing tasks (super-resolution + segmentation) executed in 89 milliseconds, while parallel audio processing (echo cancellation + speech recognition) ran concurrently without manual thread management.
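A rough way to reason about timings like these is that sequential stages add up while parallel branches cost only as much as the slowest branch. The Python sketch below applies that rule to a nested config. The per-task latencies are made-up placeholders rather than AMD measurements, and the estimate ignores data transfer and hardware contention.

```python
def estimate_latency(node, latency_ms):
    """Idealized latency: sum over sequential steps, max over parallel branches."""
    if isinstance(node, list):
        return sum(estimate_latency(step, latency_ms) for step in node)
    if isinstance(node, dict):
        return max(
            (estimate_latency(branch, latency_ms) for branch in node.values()),
            default=0,
        )
    return latency_ms[node]


# Placeholder per-task latencies in milliseconds (illustrative only).
latency_ms = {
    "super_resolution": 40,
    "segmentation": 30,
    "echo_cancellation": 25,
    "speech_recognition": 20,
}

parallel = {
    "video": ["super_resolution", "segmentation"],
    "audio": ["echo_cancellation", "speech_recognition"],
}
serial = ["super_resolution", "segmentation",
          "echo_cancellation", "speech_recognition"]

parallel_ms = estimate_latency(parallel, latency_ms)  # max(40 + 30, 25 + 20)
serial_ms = estimate_latency(serial, latency_ms)      # 40 + 30 + 25 + 20
print(parallel_ms, serial_ms)
```

With these placeholder numbers the parallel layout is bounded by the video branch, so shortening the audio branch alone would not reduce the total.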

The system supports real-time parameter tuning through JSON configuration, including:

- Model-specific xclbin overlay specifications for AMD AIE2P accelerators

- FPS targets and priority levels for latency-critical tasks

- Optimization levels (info, debug, performance) without code recompilation

Developers can iterate on pipeline configurations, such as testing whether parallel execution of previously sequential tasks improves throughput, by modifying JSON files rather than refactoring application logic.

Enterprise Deployment Implications

Multi-model orchestration complexity has historically limited AI adoption in resource-constrained environments such as edge devices and embedded systems. AMD’s declarative approach reduces the barrier to entry for developers without deep expertise in concurrent programming or hardware acceleration.

Key operational benefits include:

- Rapid prototyping: Change execution order or parallelism by editing configuration files, eliminating recompilation cycles

- Hardware abstraction: Unified JSON representation decouples model definitions from underlying execution providers

- Maintenance reduction: Model upgrades require updating file paths in JSON rather than rewriting orchestration logic
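As an illustration of the maintenance point, a model upgrade can amount to a path substitution in the configuration. The Python sketch below assumes a hypothetical schema in which each task stores its file under a `model` key; the helper `swap_model` and the file names are invented for the example.

```python
import json


def swap_model(node, old_path, new_path):
    """Return a copy of the config with every matching "model" path replaced."""
    if isinstance(node, list):
        return [swap_model(item, old_path, new_path) for item in node]
    if isinstance(node, dict):
        return {
            key: (new_path if key == "model" and value == old_path
                  else swap_model(value, old_path, new_path))
            for key, value in node.items()
        }
    return node  # strings, numbers, and other scalars pass through unchanged


# Hypothetical config with one task; only the model path changes.
config = json.loads('{"video": [{"model": "face_v1.onnx", "provider": "NPU"}]}')
updated = swap_model(config, "face_v1.onnx", "face_v2.onnx")
print(json.dumps(updated, indent=2))
```

The function builds a new structure rather than editing in place, so the original configuration stays available for comparison or rollback.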

Organizations deploying video analytics, autonomous systems, or real-time translation services can leverage this tool to manage pipelines spanning 5-10 models across diverse hardware without custom integration code. The framework’s compatibility with ONNX ensures support for models trained in PyTorch, TensorFlow, or other major frameworks.

Frequently Asked Questions (FAQs)

How does AMD’s JSON deployment tool handle hardware conflicts?

The tool automatically routes models to specified execution providers (NPU, GPU, CPU, DSP) and manages data movement between devices through its DAG scheduler.

What model formats are supported?

The tool currently supports ONNX-format models, with VitisAI, CUDA, CPU, DSP, and NPU execution providers configured via JSON.

Can this tool integrate with existing AI frameworks?

Yes, it works with ONNX Runtime’s execution provider framework, supporting models from PyTorch, TensorFlow, and other ONNX-compatible frameworks.

What is the performance overhead of JSON-based orchestration?

AMD’s reference implementation shows a total execution time of 293 ms for a 10-model pipeline with automatic synchronization, which the company describes as comparable to hand-tuned implementations.