Quick Brief

- The Framework: AMD released a reproducible evaluation method for punctuation restoration models in ASR systems using Sherpa-ONNX on January 14, 2026, targeting Ryzen AI deployment.

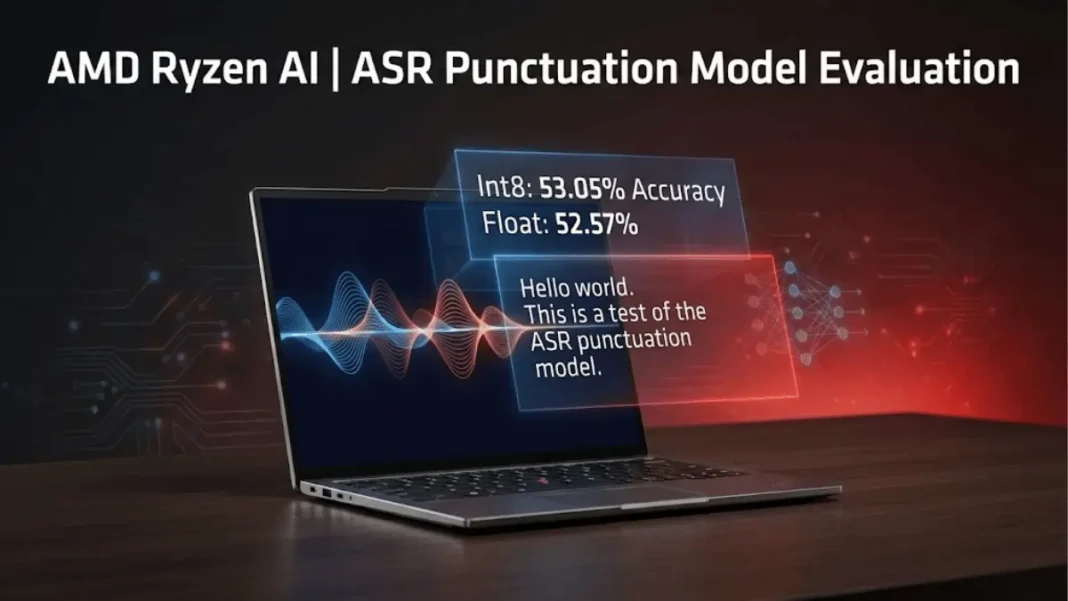

- The Performance: The int8 quantized model scored 53.05% accuracy versus 52.57% for the float model, while cutting memory usage from 700MB to 260MB and load time from 400ms to 140ms.

- The Impact: Developers building voice-driven applications can now select optimized punctuation models that improve LLM prompt quality while maintaining efficiency on AMD hardware.

- The Context: ASR models like Zipformer produce unpunctuated text streams, which degrades LLM performance because language models are trained on properly formatted text corpora.

AMD published a technical framework for evaluating punctuation model accuracy in Automatic Speech Recognition (ASR) systems, addressing a critical gap in speech-to-text deployment for LLM-driven applications. The semiconductor manufacturer introduced a character-level comparison methodology using the Sherpa-ONNX inference framework specifically optimized for Ryzen AI platforms, providing developers with reproducible benchmarks for model selection.

The ASR-LLM Integration Challenge

Modern voice applications, including virtual assistants and real-time transcription, increasingly rely on large language models for downstream processing. ASR engines such as Zipformer output continuous word streams without punctuation, which makes transcripts harder for people to read and degrades LLM performance. Language models are trained on text corpora with proper sentence boundaries and punctuation marks, so unpunctuated ASR output makes for poor prompts.

Punctuation restoration models insert appropriate marks into raw ASR transcripts, but the industry lacks standardized evaluation protocols. AMD’s methodology addresses this with a dynamic programming (minimum edit distance) algorithm that counts character-level differences between model output and ground-truth text.
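The character-level comparison described above can be illustrated with a standard Levenshtein distance. This is a minimal sketch, not AMD's evaluation script: the function names are hypothetical, and the `char_accuracy` formula (one minus mismatches divided by reference length) is an assumption, since the exact accuracy definition is not stated here.

```python
def edit_distance(hyp: str, ref: str) -> int:
    """Minimum number of character insertions, deletions, and
    substitutions needed to turn hyp into ref (Levenshtein distance)."""
    # prev[j] holds the distance between the hyp prefix processed so far
    # and the first j characters of ref.
    prev = list(range(len(ref) + 1))
    for i, h in enumerate(hyp, start=1):
        curr = [i]  # turning i hyp characters into an empty ref: i deletions
        for j, r in enumerate(ref, start=1):
            cost = 0 if h == r else 1
            curr.append(min(
                prev[j] + 1,         # delete h from hyp
                curr[j - 1] + 1,     # insert r into hyp
                prev[j - 1] + cost,  # keep (cost 0) or substitute (cost 1)
            ))
        prev = curr
    return prev[-1]


def char_accuracy(hyp: str, ref: str) -> float:
    """ASSUMED score: share of reference characters not counted as mismatches."""
    if not ref:
        return 1.0 if not hyp else 0.0
    return max(0.0, 1.0 - edit_distance(hyp, ref) / len(ref))


# Model output that restored neither casing nor punctuation:
print(edit_distance("hello how are you", "Hello, how are you?"))  # 3
```

The two-row formulation keeps memory proportional to the reference length, which is enough when only the mismatch count is needed and not the alignment itself.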

Benchmark Results: Int8 Quantization Advantage

AMD tested two punctuation models from the K2-FSA repository using the VocalNo dataset containing 879 sentences. The results favored the quantized model for production deployment.

| Model Type | Accuracy (879 sentences) | Load Time | Memory Footprint |
|---|---|---|---|
| Float32 | 52.57% | 400ms | 700MB |
| Int8 | 53.05% | 140ms | 260MB |

The int8 model was 0.48 percentage points more accurate while loading in 65% less time and using 63% less memory. On a secondary 100-sentence test set, the quantized model again scored higher, at 47.93% versus 46.45% for the float variant.
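The relative figures follow directly from the table values. A short sketch of the arithmetic, where `relative_reduction` is a helper name introduced only for illustration:

```python
def relative_reduction(before: float, after: float) -> float:
    """Percentage by which `after` is smaller than `before`."""
    return (before - after) / before * 100.0


load_cut = relative_reduction(400, 140)    # load time, ms
memory_cut = relative_reduction(700, 260)  # memory footprint, MB
accuracy_gap = 53.05 - 52.57               # percentage points, not percent

print(f"load time:    {load_cut:.1f}% lower")         # 65.0% lower
print(f"memory:       {memory_cut:.1f}% lower")       # 62.9% lower
print(f"accuracy gap: {accuracy_gap:.2f} points")     # 0.48 points
```

The accuracy difference is a gap between two percentages, so it is expressed in percentage points. The load-time figure is a reduction in elapsed time, equivalent to loading roughly 2.9 times as fast.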

AdwaitX Analysis: Deployment Strategy for Edge AI

The smaller footprint and shorter load time, at no cost in accuracy, make int8 quantization the stronger choice for Ryzen AI-powered applications where memory bandwidth and latency directly impact user experience. AMD’s recent Ryzen AI Max+ processors announced at CES 2026 feature 60 TOPS neural processing units with full ROCm software support, providing hardware acceleration for these models. The 128GB unified memory architecture in Max+ variants enables developers to run punctuation pipelines alongside large language models without cloud dependencies.

The evaluation framework uses the standard C++ APIs from Sherpa-ONNX, so it fits into existing Windows-based development workflows built on Visual Studio 2022 toolchains. Developers can obtain pre-trained models from the K2-FSA GitHub repository, which maintains ONNX format implementations for cross-platform deployment.

Technical Implementation Architecture

AMD’s methodology requires three files: ground-truth text with proper punctuation (f_golden.txt), unpunctuated input (f_input.txt), and model-generated output (f_output.txt). The evaluation script implements a minimum edit distance algorithm to calculate total character mismatches between the output and the ground truth, accounting for insertions, deletions, and substitutions.
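How the unpunctuated input file was prepared is not described here. One plausible approach, shown as a sketch under that assumption, is to derive f_input.txt from f_golden.txt by stripping punctuation line by line. The function names and the `PUNCTUATION` set are hypothetical; a dataset with full-width or other non-ASCII marks would need them added to the set.

```python
from pathlib import Path

# ASSUMPTION: these ASCII marks are the ones the model is expected to restore.
PUNCTUATION = ",.?!;:"
_STRIP_TABLE = str.maketrans("", "", PUNCTUATION)


def strip_punctuation(sentence: str) -> str:
    """Remove the marks in PUNCTUATION and collapse repeated whitespace."""
    return " ".join(sentence.translate(_STRIP_TABLE).split())


def write_input_file(golden_path, input_path) -> None:
    """Write one unpunctuated line to input_path per line of golden_path."""
    lines = Path(golden_path).read_text(encoding="utf-8").splitlines()
    stripped = "".join(strip_punctuation(line) + "\n" for line in lines)
    Path(input_path).write_text(stripped, encoding="utf-8")


print(strip_punctuation("Hello, how are you?"))  # Hello how are you
```

Casing is left untouched in this sketch. If the ASR engine emits lowercase or uppercase text only, the input would need the same normalization, and any casing the model fails to restore would then count as character mismatches.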

The test harness compiles against sherpa-onnx-core.lib and requires ONNX Runtime DLL dependencies. Model configuration accepts parameters for thread count, debug mode, and execution provider specification, with CPU inference recommended for baseline measurements.
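For baseline measurements such as model load time, a small timing wrapper is usually enough. The sketch below is not taken from AMD's harness: `median_load_ms` and `fake_loader` are hypothetical names, and the stand-in loader would be replaced by whatever call actually constructs the punctuation model with the chosen thread count and execution provider.

```python
import statistics
import time
from typing import Callable


def median_load_ms(load_model: Callable[[], object], repeats: int = 5) -> float:
    """Median wall-clock time, in milliseconds, over `repeats` calls to load_model."""
    if repeats < 1:
        raise ValueError("repeats must be at least 1")
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        load_model()
        samples.append((time.perf_counter() - start) * 1000.0)
    return statistics.median(samples)


def fake_loader() -> object:
    """Stand-in for real model construction; sleeps to simulate work."""
    time.sleep(0.01)
    return object()


print(f"median load time: {median_load_ms(fake_loader):.1f} ms")
```

Repeated loads in one process tend to benefit from the operating system's file cache, so the first sample is often the slowest. Reporting the median reduces the influence of that cold start; measuring it separately may be more appropriate when startup latency is the quantity of interest.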

Roadmap for ASR Pipeline Optimization

AMD positions this evaluation framework as foundational for developers targeting the Ryzen AI Halo Developer Platform launching in Q2 2026. The desktop development kit promises “leadership tokens-per-second-per-dollar” for AI workloads, suggesting optimized pricing for punctuation restoration in commercial transcription services.

Future iterations may incorporate hardware acceleration on Ryzen AI processors, which pair Zen 5 cores and Radeon 8060S graphics with XDNA architecture-based NPUs. The reproducible testing methodology enables developers to benchmark custom-trained punctuation models against AMD’s baseline results using domain-specific datasets.

Frequently Asked Questions (FAQs)

How does AMD’s punctuation model evaluation method work?

AMD uses dynamic programming to compare model output with ground-truth text character by character, counting mismatches (insertions, deletions, and substitutions) across the sentences in a dataset.

What accuracy do punctuation models achieve on AMD platforms?

Int8 quantized models reach 53.05% accuracy on 879-sentence tests while float models achieve 52.57%, both tested using the VocalNo dataset.

Why do ASR systems require punctuation restoration?

ASR engines output unpunctuated text streams that degrade LLM performance and human readability, since language models train on properly formatted corpora.

Which punctuation model performs better on Ryzen AI?

In AMD’s tests, the int8 quantized model outperformed the float variant with accuracy 0.48 percentage points higher, a 65% shorter load time at 140ms, and 63% lower memory usage at 260MB.