Quick Brief

- The Release: OpenAI published a technical deep-dive on January 23, 2026, detailing the agent loop architecture powering Codex CLI, its open-source software development agent launched in April 2025.

- The Impact: Developers, enterprise engineering teams, and AI infrastructure providers gain insights into how OpenAI constructs agentic systems using the Responses API, prompt caching, and stateless request handling.

- The Context: Following partnerships with Cisco (January 19) and JetBrains (January 22), OpenAI positions Codex as enterprise-ready infrastructure for autonomous software engineering, competing directly with GitHub Copilot and Cursor.

- Technical Significance: With prompt caching, model sampling cost grows linearly rather than quadratically over a conversation, and the architecture supports Zero Data Retention configurations for regulated industries.

OpenAI engineer Michael Bolin released the first installment of a technical series explaining how Codex CLI executes its core agent loop, the orchestration layer between users, large language models, and execution tools. The post reveals architectural decisions around the Responses API, context window management, and performance optimization techniques that enable Codex to operate as a local software agent across Windows, macOS, and Linux.

Architecture of the Codex Agent Loop

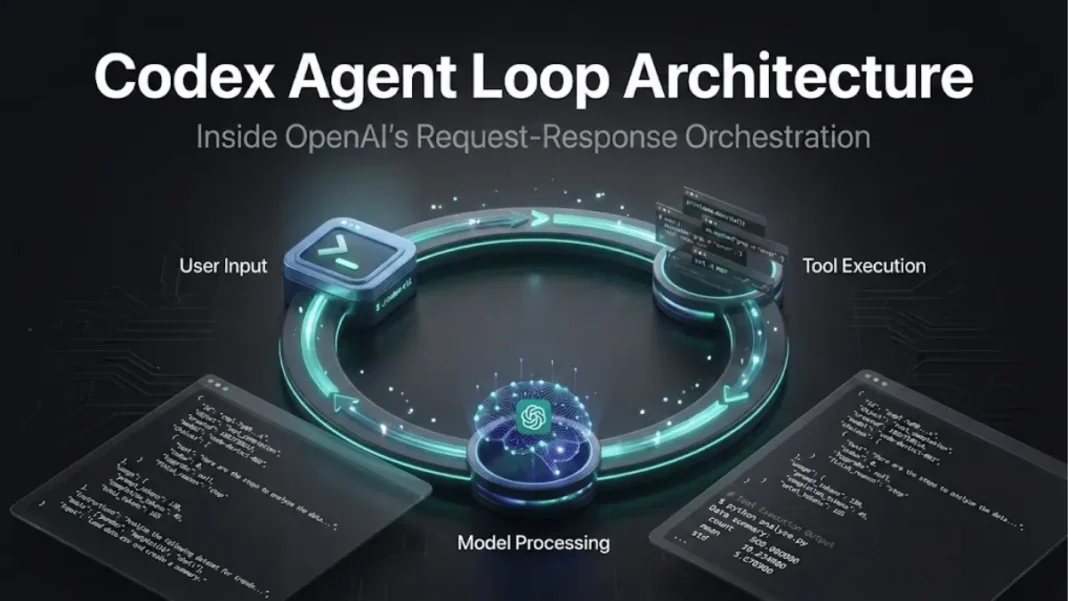

The agent loop operates as a request-response cycle where Codex CLI sends HTTP requests to OpenAI’s Responses API endpoints. Each cycle begins when a user submits input, which Codex transforms into a structured prompt containing system instructions, available tools, and contextual metadata about the local development environment.

The Responses API processes this prompt by tokenizing text into integer sequences, sampling the model to generate output tokens, and streaming results back to Codex via Server-Sent Events (SSE). The model either produces a final assistant message or requests a tool call such as executing a shell command or reading a file. When tool calls occur, Codex executes them locally, appends the results to the original prompt, and resubmits the expanded context to the API.
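The streaming step can be illustrated with a minimal sketch of SSE parsing, assuming the standard `event:` / `data:` line framing with a blank line ending each event. The `parse_sse` helper, the sample stream, and the payload fields are hypothetical and are not taken from the Codex source.

```python
import json
from typing import Iterable, Iterator


def parse_sse(lines: Iterable[str]) -> Iterator[dict]:
    """Yield one dict per SSE event; a blank line terminates an event."""
    event_type, data_lines = None, []
    for raw in lines:
        line = raw.rstrip("\n")
        if line == "":
            if data_lines:
                yield {"event": event_type, "data": json.loads("\n".join(data_lines))}
            event_type, data_lines = None, []
        elif line.startswith("event:"):
            event_type = line[len("event:"):].strip()
        elif line.startswith("data:"):
            data_lines.append(line[len("data:"):].strip())


# Hypothetical stream: event names and payload fields are placeholders.
sample_stream = [
    "event: response.output_text.delta",
    'data: {"delta": "Hel"}',
    "",
    "event: response.output_text.delta",
    'data: {"delta": "lo"}',
    "",
    "event: response.completed",
    'data: {"status": "completed"}',
    "",
]

events = list(parse_sse(sample_stream))
# Concatenate the text fragments in arrival order.
text = "".join(e["data"]["delta"] for e in events if "delta" in e["data"])
print(text)
```

A real client would read these lines incrementally from the HTTP response body and render each fragment as it arrives, rather than collecting the whole stream first.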

This loop continues until the model stops emitting tool calls and returns an assistant message, signaling task completion. A single conversation turn can include dozens of inference-tool cycles before control returns to the user. The architecture maintains conversation history by appending each turn’s messages and tool calls to subsequent prompts, causing prompt length to grow linearly with conversation depth.
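The loop described above can be sketched as follows. This is an illustration under stated assumptions, not Codex's implementation: `fake_model` and `fake_shell` are hypothetical stand-ins for the inference call and for local tool execution, and the item shapes are simplified.

```python
from typing import Callable


def fake_model(prompt: list[dict]) -> dict:
    """Stand-in for an inference call: asks for one tool call, then finishes."""
    if not any(item["type"] == "tool_result" for item in prompt):
        return {"type": "tool_call", "name": "shell", "arguments": "echo hi"}
    return {"type": "message", "role": "assistant", "content": "Done."}


def fake_shell(arguments: str) -> str:
    """Stand-in for local tool execution; does not run a real shell."""
    return f"ran: {arguments}"


def run_turn(prompt: list[dict], model: Callable, tools: dict,
             max_cycles: int = 50) -> list[dict]:
    """Loop until the model returns an assistant message instead of a tool call."""
    for _ in range(max_cycles):
        output = model(prompt)
        prompt.append(output)  # history only ever grows by appending
        if output["type"] == "message":
            return prompt  # control returns to the user
        result = tools[output["name"]](output["arguments"])
        prompt.append({"type": "tool_result", "name": output["name"], "output": result})
    raise RuntimeError("exceeded max_cycles without a final message")


history = run_turn(
    [{"type": "message", "role": "user", "content": "Say hi in the shell"}],
    fake_model,
    {"shell": fake_shell},
)
print([item["type"] for item in history])
```

The `max_cycles` guard is an addition for the sketch; it simply prevents an endless loop if the stand-in model never produces a final message.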

Responses API Request Structure

Codex CLI constructs JSON payloads with three critical parameters for the Responses API. The instructions field contains system-level directives read from ~/.codex/config.toml or model-specific base instructions bundled with the CLI, such as gpt-5.2-codex_prompt.md. The tools field enumerates functions the model can invoke, including Codex-provided utilities, Responses API native tools, and user-defined tools from Model Context Protocol (MCP) servers.

The input field assembles a list of messages, each assigned one of four roles: system, developer, user, or assistant, in descending order of weight. Codex inserts a developer-role message describing sandbox permissions for the CLI’s built-in shell tool, optional developer instructions from user configuration, and aggregated user instructions from AGENTS.md files located in project directories. A final user-role message specifies the current working directory and shell environment before appending the user’s actual query.

The Responses API server reorders these components into a final prompt structure: system message (server-controlled), tool definitions, instructions (client-controlled), and the input list. This separation ensures OpenAI can inject model-specific preambles while clients retain control over task-specific context.
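A rough sketch of the client-side payload, assuming simplified message shapes (plain string content) and a hypothetical `build_request` helper. The instruction text, tool definition, and environment strings are placeholders, and the optional developer instructions and AGENTS.md content are omitted for brevity.

```python
import json


def build_request(model: str, instructions: str, tools: list[dict],
                  sandbox_note: str, env_note: str, query: str) -> dict:
    """Assemble a request body with the instructions, tools, and input fields."""
    return {
        "model": model,
        "instructions": instructions,
        "tools": tools,
        "input": [
            {"role": "developer", "content": sandbox_note},  # sandbox permissions
            {"role": "user", "content": env_note},           # cwd and shell
            {"role": "user", "content": query},              # the actual request
        ],
        "stream": True,
    }


payload = build_request(
    model="gpt-5.2-codex",
    instructions="You are a coding agent.",  # placeholder text
    tools=[{
        "type": "function",
        "name": "shell",  # illustrative tool definition
        "description": "Run a shell command",
        "parameters": {
            "type": "object",
            "properties": {"command": {"type": "string"}},
            "required": ["command"],
        },
    }],
    sandbox_note="Sandbox: workspace write access only.",
    env_note="cwd: /home/dev/project, shell: bash",
    query="Fix the failing test",
)
print(json.dumps(payload, indent=2))
```

Keeping the static fields first and the user's query last mirrors the ordering the article describes, which matters for prefix-based caching.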

Prompt Caching Performance Gains

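OpenAI’s prompt caching mechanism lets Codex keep model sampling cost linear rather than quadratic over a conversation. Because every request resends the full, ever-growing history, the cumulative data sent (and, without caching, the cumulative tokens processed) grows quadratically with the number of requests. Cache hits occur when the new prompt contains an exact prefix match with a previous inference call, allowing the API to reuse intermediate computations rather than reprocessing the entire context.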

Codex maximizes cache hit rates by placing static content (instructions, tool definitions, and sandbox configurations) at the beginning of prompts, with variable user messages appended at the end. Operations that modify early prompt segments trigger expensive cache misses: changing the tools list mid-conversation, switching models, or altering sandbox permissions all invalidate cached prefixes.
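The effect of prefix matching can be shown with a toy model. This sketch treats each list element as one "token" and assumes the cache holds only the previous request; real caches operate on actual tokens and may impose minimum lengths or block granularity. The helper names are hypothetical.

```python
def common_prefix_len(a: list[str], b: list[str]) -> int:
    """Length of the shared leading run of two sequences."""
    n = 0
    for x, y in zip(a, b):
        if x != y:
            break
        n += 1
    return n


def tokens_processed(requests: list[list[str]], use_cache: bool) -> int:
    """Count tokens needing fresh computation across a series of requests."""
    total, previous = 0, []
    for prompt in requests:
        cached = common_prefix_len(previous, prompt) if use_cache else 0
        total += len(prompt) - cached
        previous = prompt
    return total


static = ["instructions", "tools", "sandbox"]
# Five requests, each resending the static prefix plus a growing message list.
requests = [static + [f"msg{i}" for i in range(k)] for k in range(1, 6)]

print(tokens_processed(requests, use_cache=False))  # 4+5+6+7+8 = 30
print(tokens_processed(requests, use_cache=True))   # 4+1+1+1+1 = 8

# Reordering the static prefix on the second request defeats the cache.
reordered = [requests[0], ["tools", "instructions", "sandbox", "msg0", "msg1"]]
print(tokens_processed(reordered, use_cache=True))  # 4+5 = 9
```

With a stable prefix, each request only pays for its new suffix, so total work grows linearly with the number of requests; without caching, or after a prefix change, the whole prompt is reprocessed.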

An early bug in MCP tool integration caused Codex to enumerate tools in non-deterministic order, producing cache misses on every request. The team resolved this by enforcing consistent tool ordering, but MCP servers remain a caching challenge because they can dynamically update available tools via notifications/tools/list_changed events during long conversations. When configuration changes occur mid-turn, Codex appends new developer or user messages to reflect updates rather than modifying earlier prompt segments, preserving cache validity.
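One way to get a stable tool order is to sort definitions by name before serializing them. The article says only that the team enforced consistent ordering, so sorting by name is an assumption here, as are the tool names and the `record_config_change` helper, which illustrates appending an update instead of rewriting earlier messages.

```python
import json


def serialize_tools(tools: list[dict]) -> str:
    """Serialize tool definitions in a stable order (sorted by name)."""
    ordered = sorted(tools, key=lambda t: t["name"])
    return json.dumps(ordered, sort_keys=True)


def record_config_change(history: list[dict], note: str) -> list[dict]:
    """Append a developer message describing the change; never edit earlier items."""
    return history + [{"role": "developer", "content": note}]


# The same three hypothetical tools, enumerated in different orders.
run_a = [{"name": "read_file"}, {"name": "shell"}, {"name": "apply_patch"}]
run_b = [{"name": "shell"}, {"name": "apply_patch"}, {"name": "read_file"}]

print(json.dumps(run_a) == json.dumps(run_b))          # naive serialization differs
print(serialize_tools(run_a) == serialize_tools(run_b))  # stable serialization matches

history = [{"role": "user", "content": "Refactor the parser"}]
updated = record_config_change(history, "Sandbox now allows network access.")
print(updated[: len(history)] == history)  # earlier prefix is unchanged
```

Because the updated history keeps the original items as an exact prefix, a prefix-based cache can still reuse the work done for them.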

Amazon Bedrock reports up to 85% faster response times for cached content on supported models, with prompt caching reducing costs by avoiding redundant computation. OpenAI’s implementation applies similar principles to maintain sub-second response latency in Codex CLI during multi-turn conversations.

Context Window Management and Compaction

Every large language model enforces a context window, the maximum token count for combined input and output in a single inference call. Codex addresses window exhaustion through automatic conversation compaction when token usage exceeds configured thresholds. Early implementations required users to manually invoke a /compact command, which queried the Responses API with summarization instructions and replaced the conversation history with a condensed assistant message.

The current architecture uses a dedicated /responses/compact endpoint that returns a list of items suitable for replacing the original input while preserving conversational coherence. This list includes a special type=compaction item with encrypted content that maintains the model’s latent understanding of prior exchanges without storing full message history. Codex triggers compaction automatically when the auto_compact_limit configuration value is exceeded, occurring transparently without user intervention.
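The trigger logic can be sketched as a simple threshold check. Everything here is a stand-in: `count_tokens` is a crude word counter rather than a tokenizer, `fake_compact` makes no network request, and the `encrypted_content` field name and the choice to keep the latest message are assumptions about the shape of the returned list.

```python
from typing import Callable


def count_tokens(items: list[dict]) -> int:
    """Crude stand-in for a tokenizer: counts whitespace-separated words."""
    return sum(len(str(item.get("content", "")).split()) for item in items)


def fake_compact(items: list[dict]) -> list[dict]:
    """Stand-in for a compaction call; makes no network request."""
    return [
        {"type": "compaction", "encrypted_content": "<opaque blob>"},  # field name assumed
        items[-1],  # keep the most recent message verbatim
    ]


def maybe_compact(items: list[dict], auto_compact_limit: int,
                  compact: Callable[[list[dict]], list[dict]] = fake_compact) -> list[dict]:
    """Replace the history with a compacted list once it exceeds the limit."""
    if count_tokens(items) <= auto_compact_limit:
        return items
    return compact(items)


# Five messages of 40 words each: 200 "tokens" by the crude counter.
history = [{"type": "message", "role": "user", "content": "word " * 40} for _ in range(5)]
compacted = maybe_compact(history, auto_compact_limit=100)
print(len(history), "->", len(compacted))
```

In this sketch the check runs before each request, so the user never has to invoke compaction manually.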

This approach supports Zero Data Retention (ZDR) customers who prohibit server-side data persistence. OpenAI stores ZDR customers’ decryption keys but not their conversation data, allowing encrypted reasoning messages from previous turns to be decrypted server-side during inference while maintaining compliance with data retention policies. The stateless request model, in which every API call includes the full conversation context rather than referencing a stored session ID, simplifies ZDR implementation but increases network transfer volumes.

Enterprise Adoption and Competitive Position

Cisco announced integration of Codex into enterprise engineering workflows on January 19, 2026, positioning the agent as embedded infrastructure for software development teams. JetBrains followed on January 22 by natively integrating Codex into IntelliJ, PyCharm, and other IDEs via version 2025.3, offering the agent free for a limited period through JetBrains AI chat. Developers can adjust Codex’s autonomy level from simple question-response mode to full network access and autonomous command execution, with model and reasoning budget selection available directly in the IDE.

The competitive landscape includes GitHub Copilot, positioned as an AI assistant for developers writing code themselves; Cursor, framed as an AI teammate for collaborative development; and Windsurf, marketed as a full agentic team. Copilot integrates natively into mainstream IDEs with subtle AI-powered code completion.

OpenAI’s December 17, 2025 release of GPT-5.2-Codex introduced the company’s most advanced agentic coding model for professional software engineering and defensive cybersecurity. The model underpins Codex CLI, Codex Cloud, and the Codex VS Code extension.

Open-Source Repository and Future Development

OpenAI maintains Codex CLI’s implementation in a public GitHub repository at https://github.com/openai/codex, where design decisions are documented in issues and pull requests. The codebase includes templates for sandbox permissions (workspace_write.md), request handling (on_request.md), and model-specific instructions that ship with each CLI release.

Upcoming posts in the technical series will examine the CLI’s architecture, tool implementation details, and sandboxing model. OpenAI plans to introduce more interactive agent workflows, enabling developers to provide mid-task guidance, collaborate on implementation strategies, and receive proactive progress updates. Future integrations will extend beyond GitHub to include issue trackers and continuous integration systems, allowing task assignment from Codex CLI, ChatGPT Desktop, or external development tools.

Frequently Asked Questions (FAQs)

How does OpenAI Codex CLI execute code changes?

Codex CLI operates locally on developer machines, using a sandboxed shell tool to run commands, read files, and write code based on model-generated tool calls processed through the agent loop.

What is the Responses API?

The Responses API is OpenAI’s inference endpoint that accepts structured JSON payloads containing instructions, tools, and input, returning streaming events with model outputs and tool call requests.

Does Codex work with non-OpenAI models?

Yes. Codex CLI supports any Responses API-compatible endpoint, including local inference via Ollama or LM Studio with the --oss flag for open-source models.

How does prompt caching reduce Codex costs?

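Caching stores computation from previous inference calls, enabling reuse when new prompts share exact prefixes with cached requests, which reduces redundant processing. For comparison, Amazon Bedrock reports up to 85% faster response times for cached content on supported models.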

What makes Codex different from GitHub Copilot?

Copilot provides inline code suggestions as developers type, while Codex operates as an autonomous agent that plans tasks and executes multi-step workflows with tool calls.