Quick Brief

- The Launch: Google announced Agentic Vision on January 27, 2026, enabling Gemini 3 Flash to perform iterative visual reasoning through code execution rather than single-pass image analysis.

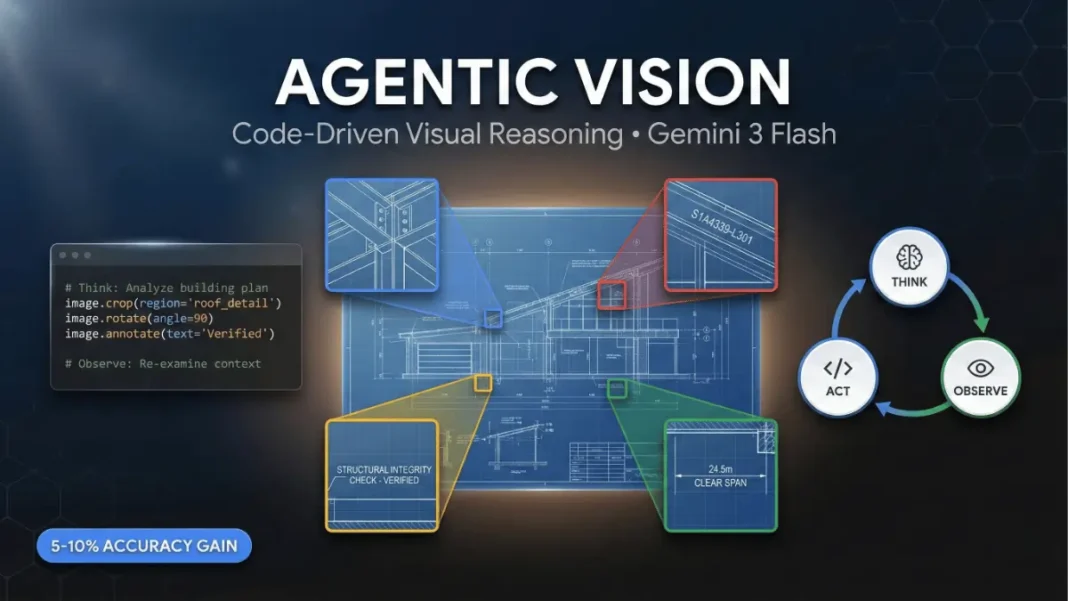

- The Technology: Agentic Vision uses a Think-Act-Observe loop in which the model generates and executes Python code to manipulate images: cropping, rotating, annotating, and performing calculations.

- The Access: Available immediately via the Gemini API, Google AI Studio, and Vertex AI, and rolling out in the Gemini app under the Thinking model option.

- The Performance: Google reports that enabling code execution with Gemini 3 Flash delivers a consistent 5-10% quality boost across most vision benchmarks.

Google introduced Agentic Vision for Gemini 3 Flash on January 27, 2026, launching a code execution framework that transforms how the model processes visual information. The capability addresses a fundamental challenge in AI vision systems: when fine details like serial numbers, distant text, or microscopic components are overlooked during initial image analysis, models must fill in gaps with educated guesses, increasing hallucination risk.

The Think-Act-Observe Execution Architecture

Agentic Vision operates through a three-stage iterative loop that treats image understanding as an investigative process. In the Think phase, Gemini 3 Flash analyzes the user’s query alongside the initial image to formulate a multi-step plan for extracting visual information. During the Act phase, the model generates and executes Python code to manipulate the image: cropping specific regions, rotating perspectives, drawing annotations, counting objects, or running mathematical calculations. In the Observe phase, the transformed images are appended to the model’s context window, so the model can re-examine them before generating the final response.

When the model needs to inspect fine-grained visual details, Agentic Vision automatically engages code execution to ground its answer in pixel-level inspection. This code-driven approach replaces probabilistic outputs with verifiable, executable logic.
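The loop described above can be sketched in plain Python. This is an illustrative toy, not Google's implementation: the "image" is a nested list of pixel values, and `think`, `crop`, and `run_loop` are hypothetical names in which a simple rule stands in for the model's planning. It shows only the control flow, where each cropped result is appended to the context and examined again.

```python
from typing import List, Optional, Tuple

Image = List[List[int]]          # toy grayscale image: rows of pixel values
Box = Tuple[int, int, int, int]  # (left, top, right, bottom), right/bottom exclusive


def crop(image: Image, box: Box) -> Image:
    """Act step: return the sub-image inside the box."""
    left, top, right, bottom = box
    return [row[left:right] for row in image[top:bottom]]


def think(context: List[Image]) -> Optional[Box]:
    """Think step (stand-in for the model): choose the tightest box around
    the non-zero pixels of the latest image, or None if zooming no longer helps."""
    image = context[-1]
    rows = [y for y, row in enumerate(image) if any(row)]
    cols = [x for x in range(len(image[0])) if any(row[x] for row in image)]
    if not rows or not cols:
        return None
    box = (min(cols), min(rows), max(cols) + 1, max(rows) + 1)
    full = (0, 0, len(image[0]), len(image))
    return None if box == full else box


def run_loop(image: Image, max_steps: int = 5) -> List[Image]:
    """Repeat Think -> Act -> Observe until no further action is planned."""
    context = [image]
    for _ in range(max_steps):
        box = think(context)                     # Think: plan the next manipulation
        if box is None:
            break
        context.append(crop(context[-1], box))   # Act, then Observe the result
    return context


image = [
    [0, 0, 0, 0, 0],
    [0, 0, 7, 9, 0],
    [0, 0, 8, 0, 0],
    [0, 0, 0, 0, 0],
]
context = run_loop(image)
print(context[-1])
```

In the real system the planning and the generated code come from the model itself, and the manipulated images are actual image files rather than nested lists.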

Performance Validation and Real-World Applications

Google reports that enabling code execution with Gemini 3 Flash delivers a consistent 5-10% quality boost across most vision benchmarks. PlanCheckSolver.com, a platform that uses AI to validate building plans against local building codes, implemented code execution and improved accuracy by 5%. The system uses Gemini 3 Flash to iteratively crop and analyze sections of high-resolution building plans, appending each cropped image back into the model’s context to verify details like roof edges and structural components against compliance requirements.

In the Gemini app demonstration, when asked to count fingers on a hand, the model autonomously generated Python code to draw bounding boxes and numeric labels over each detected finger, creating an annotated image that serves as a visual reference for the model’s analysis.
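The annotation step in that demonstration can be approximated with a small sketch. The detections below are hard-coded, hypothetical coordinates, and the text canvas stands in for a real image. Code generated by the model would more likely use an imaging library, and `draw_box` and `annotate` are names invented for this example.

```python
from typing import List, Tuple

Box = Tuple[int, int, int, int]  # (left, top, right, bottom), inclusive corners


def draw_box(canvas: List[List[str]], box: Box, label: str) -> None:
    """Draw a rectangle outline and place the label in its top-left corner."""
    left, top, right, bottom = box
    for x in range(left, right + 1):
        canvas[top][x] = "-"
        canvas[bottom][x] = "-"
    for y in range(top, bottom + 1):
        canvas[y][left] = "|"
        canvas[y][right] = "|"
    canvas[top][left] = label


def annotate(width: int, height: int, boxes: List[Box]) -> Tuple[List[str], int]:
    """Number each box in order; return the rendered rows and the object count."""
    canvas = [[" "] * width for _ in range(height)]
    for index, box in enumerate(boxes, start=1):
        draw_box(canvas, box, str(index))
    return ["".join(row) for row in canvas], len(boxes)


# Hypothetical detections: five finger-shaped regions, hard-coded for illustration.
detections = [(0, 2, 2, 5), (4, 0, 6, 5), (8, 0, 10, 5), (12, 1, 14, 5), (16, 3, 18, 5)]
rows, count = annotate(width=19, height=6, boxes=detections)
print("\n".join(rows))
print(f"fingers counted: {count}")
```

Because every counted object carries a visible numbered box, the count can be checked against the annotated image instead of being taken on trust.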

Developer Access and Implementation

| Feature | Details |
|---|---|
| Availability | Gemini API, Google AI Studio, Vertex AI |
| Gemini App Access | Rolling out with Thinking model enabled |
| Programming Language | Python code execution |
| Capabilities | Crop, rotate, annotate, calculate on images |
| Performance Gain | 5-10% quality boost across vision benchmarks |
| Activation Method | Enable Code Execution in Tools section (AI Studio) |

Developers can access Agentic Vision by enabling the Code Execution tool in the Tools section of Google AI Studio’s Playground or through API configuration. The feature is rolling out in the Gemini app under the Thinking model option.
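For the API route, a request might be assembled roughly as follows. This is a sketch under stated assumptions: the model ID `gemini-3-flash-preview` and the `v1beta` endpoint are assumptions to check against the current API reference, `build_request` is a hypothetical helper, and the field names follow the Gemini API's public REST format for inline images and the code execution tool. The example only builds the request body and does not send it.

```python
import base64
import json
from typing import Any, Dict, Tuple

# Assumptions: model ID and endpoint version; verify both in the API reference.
MODEL_ID = "gemini-3-flash-preview"
BASE_URL = "https://generativelanguage.googleapis.com/v1beta/models"


def build_request(
    image_bytes: bytes, prompt: str, mime_type: str = "image/png"
) -> Tuple[str, Dict[str, Any]]:
    """Return the endpoint URL and a JSON body with code execution enabled."""
    body = {
        "contents": [
            {
                "parts": [
                    {
                        "inline_data": {
                            "mime_type": mime_type,
                            "data": base64.b64encode(image_bytes).decode("ascii"),
                        }
                    },
                    {"text": prompt},
                ]
            }
        ],
        # Listing the code execution tool is what lets the model run Python.
        "tools": [{"code_execution": {}}],
    }
    return f"{BASE_URL}/{MODEL_ID}:generateContent", body


url, body = build_request(b"placeholder image bytes", "Read the serial number on the label.")
print(url)
print(json.dumps(body["tools"]))
```

To send it, POST the body as JSON with an HTTP client of your choice and pass the API key in the `x-goog-api-key` header.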

AdwaitX Analysis: Code-Grounded Visual Intelligence

The introduction of Agentic Vision represents a strategic architectural shift from purely probabilistic vision systems toward code-verified image processing. By embedding Python execution directly into the inference loop, Google targets the hallucination problem that has constrained multimodal AI reliability in enterprise applications. This approach aligns with regulatory and compliance requirements for auditable AI outputs, particularly in construction validation, manufacturing quality control, and document processing workflows.

Gemini 3 Flash’s pricing at $0.50 per million input tokens and $3.00 per million output tokens positions it competitively for high-frequency visual reasoning tasks. The 5-10% performance improvement becomes operationally significant in applications processing millions of images monthly where accuracy directly impacts compliance costs and operational efficiency.
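A back-of-the-envelope estimate shows how those prices scale. The two per-token prices come from the article, while the workload figures (one million images, 2,000 input tokens and 500 output tokens per image) are hypothetical. Agentic loops that append cropped images to the context will likely consume more tokens than a single pass, so treat the result as a floor, not a forecast.

```python
INPUT_PRICE_PER_MILLION = 0.50   # USD per million input tokens (from the article)
OUTPUT_PRICE_PER_MILLION = 3.00  # USD per million output tokens (from the article)


def monthly_cost(images: int, input_tokens_per_image: int, output_tokens_per_image: int) -> float:
    """Estimate monthly spend in USD for a given image volume and token usage."""
    input_cost = images * input_tokens_per_image / 1_000_000 * INPUT_PRICE_PER_MILLION
    output_cost = images * output_tokens_per_image / 1_000_000 * OUTPUT_PRICE_PER_MILLION
    return input_cost + output_cost


# Hypothetical workload: 1,000,000 images, 2,000 input and 500 output tokens each.
cost = monthly_cost(1_000_000, 2_000, 500)
print(f"${cost:,.2f}")  # prints $2,500.00
```

Under these assumed numbers, input tokens account for $1,000 and output tokens for $1,500 of the monthly total.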

Future Development Roadmap

Google outlined three expansion phases for Agentic Vision. Currently, Gemini 3 Flash automatically performs implicit zooming when fine-grained details are required, but other actions like image rotation and visual mathematics still require explicit prompting. Future updates will make these behaviors fully implicit, eliminating the need for developers to manually specify code execution strategies.

The second phase introduces additional tools including web search and reverse image search to ground visual understanding in external knowledge bases. The third phase extends Agentic Vision beyond Gemini 3 Flash to other model sizes in the Gemini family.

Frequently Asked Questions (FAQs)

What is Agentic Vision in Gemini 3 Flash?

Agentic Vision is a code execution framework enabling Gemini 3 Flash to iteratively analyze images through a Think-Act-Observe loop using Python, replacing static single-pass analysis.

How much does Agentic Vision improve accuracy?

Google reports 5-10% quality gains across most vision benchmarks; PlanCheckSolver.com reported a 5% accuracy improvement in building plan validation after adopting code execution.

When is Agentic Vision available?

Agentic Vision has been available since January 27, 2026, via the Gemini API, Google AI Studio, and Vertex AI, and it is rolling out in the Gemini app under the Thinking model option.

What programming language does Agentic Vision use?

Agentic Vision exclusively executes Python code for image manipulation, annotation, cropping, rotation, and mathematical calculations on visual data.

How do developers enable Agentic Vision?

Enable the Code Execution tool in Google AI Studio’s Playground Tools section or configure code execution parameters in API calls.