At a Glance

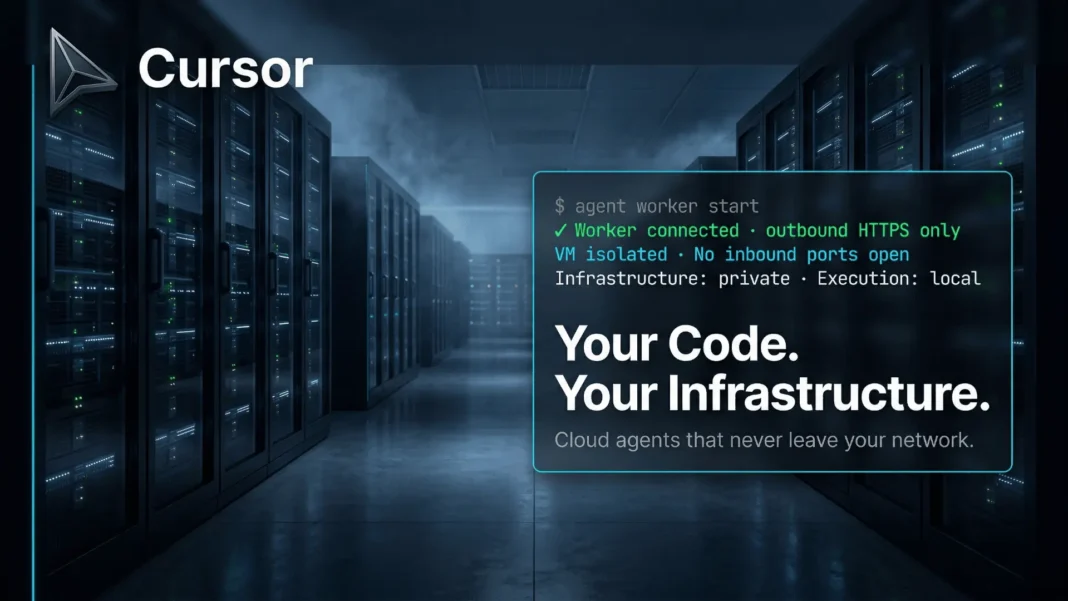

- Cursor launched self-hosted cloud agents to general availability on March 25, 2026, keeping all code execution inside your own network

- Each agent session runs in a dedicated isolated VM with terminal, browser, and full desktop access, never sharing resources across sessions

- A single command, `agent worker start`, spins up a worker; a Kubernetes operator handles fleet scaling to thousands of workers automatically

- No inbound firewall changes or VPN tunnels required; workers connect outbound-only via HTTPS to Cursor’s orchestration layer

Regulated enterprises have had one consistent objection to AI coding agents: the code leaves your building. Cursor’s self-hosted cloud agents, generally available as of March 25, 2026, cut that objection off at the root. Your codebase, secrets, and build artifacts stay inside your own infrastructure while Cursor’s cloud handles orchestration, model inference, and the user-facing experience. That split matters more than it sounds.

Most AI coding tools force a binary choice between capability and data control. Self-hosted agents collapse that binary.

Why Regulated Industries Couldn’t Touch Cloud Agents Until Now

Financial services firms, government contractors, and healthcare platforms operate under data residency rules that make standard cloud agent deployments legally problematic, not just uncomfortable. Money Forward, which handles financial data for millions of users across Japan, confirmed this directly: their security requirements made cloud-hosted agent execution a non-starter until this release.

The problem wasn’t agent capability. Cursor’s cloud agents have autonomously written, tested, and pushed production-grade code since early 2026. The gap was execution location: every tool call, every file read, every test run happened on Cursor’s infrastructure. For a fintech handling consumer financial records, that’s not a risk assessment question. It’s a compliance disqualifier.

Self-hosted agents move execution inside the customer’s perimeter while leaving inference and planning with Cursor. And that architectural boundary is exactly what compliance teams need documented.

The Architecture Behind Outbound-Only Connectivity

Here’s the quietly important detail most coverage has missed: no inbound ports open on your network. Zero. The worker process connects outbound via standard HTTPS to Cursor’s cloud, receives tool call instructions, executes them locally, and returns results upstream for the next inference round.

Traditional enterprise software integrations demand firewall rules, VPN tunnels, and network security reviews that can delay deployment by weeks. Cursor’s worker model skips that entire approval chain. Security teams see outbound HTTPS traffic to a known endpoint, which many organizations already permit by default.

Each agent session gets its own dedicated worker. Long-lived workers handle ongoing projects; single-use workers terminate the moment a task completes, leaving no persistent attack surface.
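The pull-based flow described above can be sketched in a few lines. This is a minimal illustration, not Cursor's actual protocol or client: `FakeOrchestrator`, `execute_locally`, `run_worker`, and the instruction fields (`tool`, `arg`, `final`) are all hypothetical names, and an in-memory queue stands in for the outbound HTTPS requests a real worker would make.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class FakeOrchestrator:
    """In-memory stand-in for the remote orchestration layer (hypothetical)."""
    pending: deque = field(default_factory=deque)
    results: list = field(default_factory=list)

    def next_instruction(self):
        # In a real deployment this would be an outbound HTTPS request
        # initiated by the worker; nothing ever dials in to the worker.
        return self.pending.popleft() if self.pending else None

    def submit_result(self, result):
        # Results also travel upstream on a worker-initiated request.
        self.results.append(result)


def execute_locally(instruction):
    """Run a tool call inside the customer network (stubbed for the sketch)."""
    tool, arg = instruction["tool"], instruction["arg"]
    if tool == "echo":
        return {"tool": tool, "output": arg}
    return {"tool": tool, "error": "unknown tool"}


def run_worker(orchestrator, single_use=True):
    """Pull instructions, execute them, push results; return how many ran."""
    handled = 0
    while True:
        instruction = orchestrator.next_instruction()
        if instruction is None:
            break  # sketch only: a real worker would keep polling
        orchestrator.submit_result(execute_locally(instruction))
        handled += 1
        # A single-use worker exits as soon as its task is marked finished.
        if single_use and instruction.get("final"):
            break
    return handled
```

The point of the shape is that every exchange starts from the worker side, which is why no inbound port is needed. The `single_use` flag mirrors the distinction between long-lived workers and workers that terminate when a task completes.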

Kubernetes at Scale: What the Helm Chart Actually Delivers

Scaling to a hundred workers is manageable manually. Scaling to thousands is not. Cursor ships a Helm chart and Kubernetes operator that define a WorkerDeployment resource, specifying your desired pool size while the controller manages scaling, rolling updates, and lifecycle events automatically.

This is not a generic container deployment. The operator understands agent session semantics: it knows when a worker is mid-session versus idle, handles graceful teardown without dropping active tasks, and surfaces utilization metrics for capacity planning. For non-Kubernetes environments, a fleet management API exposes the same controls over any infrastructure.
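To make "session-aware" concrete, here is a rough sketch of the kind of decision a controller could make on each reconcile pass. It is an assumption-laden illustration rather than the operator's real logic: the `Worker` type and `plan_reconcile` function are hypothetical, and the only rule modeled is that idle workers are the sole candidates for removal.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Worker:
    name: str
    in_session: bool  # True while an agent session is running on this worker


def plan_reconcile(workers, desired):
    """Return (number_to_add, names_to_remove) to move toward `desired`.

    Only idle workers are ever selected for removal, so a scale-down
    never interrupts an active session. If there are fewer idle workers
    than the surplus, the pool stays above `desired` until sessions end.
    """
    current = len(workers)
    if current < desired:
        return desired - current, []
    surplus = current - desired
    idle = [w.name for w in workers if not w.in_session]
    return 0, idle[:surplus]
```

With four workers, two of them mid-session, and a desired size of one, this plan removes only the two idle workers and leaves the busy ones alone. A later pass would finish the scale-down once those sessions end.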

Brex engineers described it plainly: the self-hosted setup now gives agents access to internal test suites and validation tooling that previously required human-in-the-loop execution. That’s the capability difference between an agent that writes code and an agent that confirms the code works.

Same Capabilities, No Capability Tax

One reasonable concern: does self-hosting strip out features? It doesn’t.

Self-hosted agents match the cloud-hosted feature set across four dimensions:

- Isolated VMs: Each session gets a dedicated machine, no cross-session resource sharing

- Agent harness: Composer 2 agentic harness with support for any frontier model

- Plugin support: MCPs, subagents, rules, hooks, and skill extensions all function identically

- Team permissions: Org-level controls over who can initiate and view agent runs

Cursor has committed that demo capabilities, including session videos, screenshots, and logs, are coming to self-hosted agents in a near-term update. Remote desktop takeover, allowing engineers to inspect and redirect an active agent session, is also on the roadmap.

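Most reviewers focus on the security story alone, but operational parity is the underrated point: apart from the demo artifacts and remote desktop takeover still on the roadmap, going self-hosted costs you no agent features. What changes is that the execution infrastructure is yours to run rather than Cursor’s.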

Where It Falls Short

Self-hosted deployment isn’t frictionless for every team. Organizations without Kubernetes expertise face a real setup curve; the Helm chart simplifies management but doesn’t eliminate the need for someone who understands cluster operations. Cursor provides a fleet management API as a fallback for non-Kubernetes environments, but that path requires custom autoscaling logic rather than a turnkey controller.

The outbound-only architecture is clean, but it does mean agent inference still routes through Cursor’s cloud. Teams operating in air-gapped environments with no outbound internet access cannot currently use this feature. That’s a hard ceiling for certain government and defense deployments where even outbound HTTPS to third-party services is blocked.

How Brex, Money Forward, and Notion Are Already Using It

Real adoption data beats architecture diagrams. Three enterprise customers disclosed their use cases publicly at launch.

Brex is using self-hosted agents to run internal test suites and validate changes with proprietary tooling, delegating end-to-end software builds to agents rather than engineers. Money Forward is building a workflow where roughly 1,000 engineers create pull requests directly from Slack using Cursor agents, a productivity surface that previously required context-switching into the IDE. Notion cited large codebase complexity as the driver: running agent workloads inside their own cloud environment lets agents access internal tools securely while eliminating the need to maintain a parallel agent infrastructure stack.

Three different companies, three different pain points, same underlying solution.

Cursor vs. GitHub Copilot on Enterprise Agent Deployment

| Capability | Cursor Self-Hosted Agents | GitHub Copilot Enterprise |
|---|---|---|
| On-premise code execution | Yes, worker runs inside your network | No, executes on GitHub Actions VMs |
| Inbound firewall changes required | No (outbound HTTPS only) | Network configuration changes required as of February 27, 2026 |
| Kubernetes operator included | Yes, WorkerDeployment CRD | No native equivalent |
| Concurrent agent sessions | Scales to thousands of workers via Helm chart | Multiple concurrent sessions supported; no published cap |
| Video proof of work | Coming soon to self-hosted | Not available |
| IP indemnity | Not confirmed | Yes, Business and Enterprise tiers for unmodified suggestions with filtering enabled |

Frequently Asked Questions (FAQs)

Does self-hosted mean fully air-gapped operation?

No. The worker process requires outbound HTTPS access to Cursor’s cloud for inference and orchestration. Code execution happens inside your infrastructure, but planning runs on Cursor’s servers. Fully air-gapped environments with no outbound internet access aren’t compatible with the current architecture.

Does Cursor provide compliance documentation?

Cursor holds SOC 2 certification, confirmed on its official site. For regulated industries like financial services and healthcare, requesting a data processing agreement directly from Cursor’s enterprise team before deployment is standard practice. Specific artifact availability should be verified with their sales team.

What if our team doesn’t use Kubernetes?

Cursor ships a fleet management API specifically for non-Kubernetes environments. It exposes utilization monitoring and lets teams build custom autoscaling logic against any infrastructure. It demands more engineering effort than the Kubernetes operator path but supports arbitrary deployment targets.
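As a rough idea of what that custom autoscaling logic might look like, the sketch below turns a utilization reading into a target pool size. Everything here is an assumption for illustration: `desired_workers`, its parameters, and the default limits are hypothetical, and the fleet API calls that would fetch the busy count and apply the result are deliberately left out because their shape is not described in this article.

```python
def desired_workers(busy, target_percent=70, min_workers=1, max_workers=1000):
    """Size the pool so busy workers sit near `target_percent` utilization.

    `busy` is the number of workers currently running a session. Integer
    arithmetic is used so the rounding is exact.
    """
    if busy < 0:
        raise ValueError("busy must be non-negative")
    if not 0 < target_percent <= 100:
        raise ValueError("target_percent must be in (0, 100]")
    # Ceiling division: smallest pool where busy / pool <= target_percent / 100.
    needed = -(-busy * 100 // target_percent)
    # Clamp to the configured floor and ceiling.
    return max(min_workers, min(max_workers, needed))
```

A scheduler could call this on a timer, compare the result with the current pool size, and start or retire workers accordingly. Keeping utilization below 100 percent leaves idle capacity so a new agent session does not wait for a worker to boot.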

How many concurrent agent sessions can run per deployment?

No hard per-deployment ceiling appears in Cursor’s documentation. The Kubernetes operator scales to thousands of workers by design, with practical limits determined by your own cluster resources rather than any Cursor-imposed cap.

How does Cursor’s self-hosted approach compare to building your own agent infrastructure?

Building proprietary agent infrastructure requires maintaining VM provisioning, model routing, tool execution sandboxing, and UX independently. Notion specifically cited no longer needing to maintain a parallel agent infrastructure stack as a reason for running agent workloads in its own cloud environment. You get production-grade orchestration without owning the orchestration layer, though the worker machines remain yours to operate.

What’s the first step for teams evaluating this?

Enable self-hosted cloud agents through the Cursor Dashboard under the Cloud Agents section, then run `agent worker start` on a machine inside your network. The Kubernetes operator or fleet API handles scaling from there. Cursor’s documentation at cursor.com/docs/cloud-agent/self-hosted covers the full setup sequence.