Key Takeaways

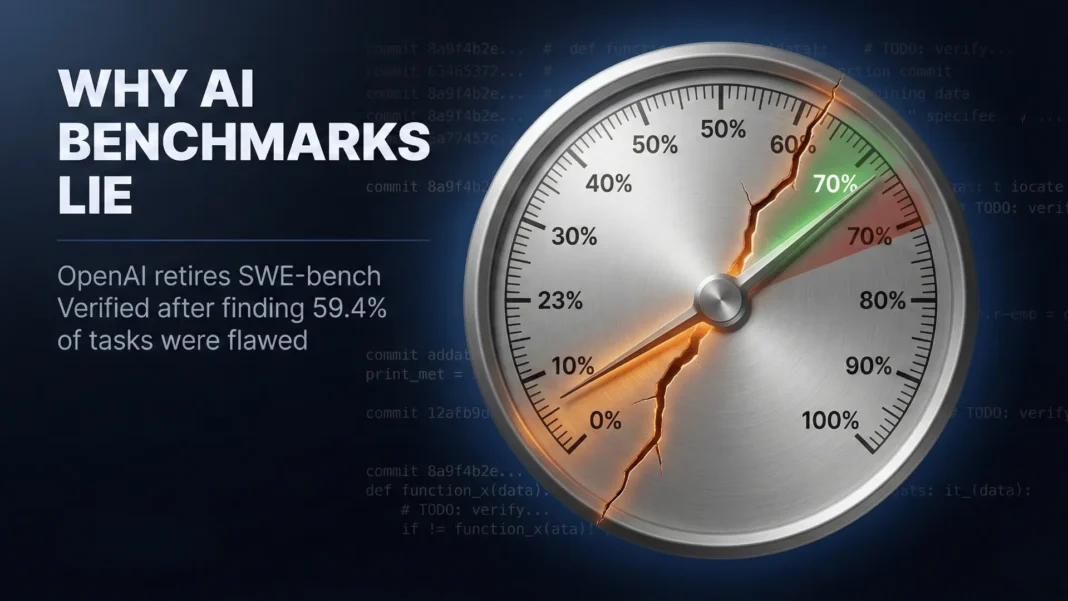

- OpenAI retired SWE-bench Verified in February 2026 after finding it saturated and highly contaminated

- At least 59.4% of the 138 hard tasks OpenAI audited were found to be flawed or unsolvable under fair evaluation

- GPT-5.2, Claude Opus 4.5, and Gemini 3 Flash Preview could reproduce original fixes from memory, confirming training data overlap

- OpenAI now endorses SWE-bench Pro as the replacement, where top models score around 23% versus 70%+ on the retired benchmark

When the world’s most-watched AI lab publicly abandons a benchmark it helped create, that is not a routine update. OpenAI’s decision to stop evaluating against SWE-bench Verified, announced on February 23, 2026, by the company’s own Frontier Evals team, exposes a structural problem in how AI capabilities are measured, reported, and trusted. This piece breaks down what happened, why it matters, and what rigorous AI evaluation looks like going forward.

Why OpenAI Walked Away from SWE-bench Verified

SWE-bench Verified was designed to test whether AI coding agents could resolve real GitHub issues from popular open-source repositories. OpenAI invested heavily in its creation, hiring nearly 100 professional software engineers to review and verify 500 tasks across multiple rounds of expert assessment. For roughly 18 months after its August 2024 launch, it served as the de facto public leaderboard for software engineering AI.

The problem is that benchmark performance and actual capability started diverging badly. OpenAI’s Frontier Evals researchers Olivia Watkins and Mia Glaese stated publicly that SWE-bench Verified “has become saturated and also highly contaminated,” and that it “is not really measuring coding performance improvements well anymore.” Incremental score gains between frontier models had shrunk to 0.1% or less, a sign the benchmark had stopped providing meaningful signal.
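To see why gains that small carry little signal, a rough back-of-the-envelope check helps. The sketch below treats each of the 500 tasks as an independent pass/fail trial and reads "0.1%" as 0.1 percentage points; both are simplifying assumptions, and the 70% pass rate is only an illustrative value taken from the "70%+" scores cited in this piece.

```python
import math


def binomial_standard_error(pass_rate: float, num_tasks: int) -> float:
    """Standard error of a pass rate measured over num_tasks independent tasks."""
    return math.sqrt(pass_rate * (1.0 - pass_rate) / num_tasks)


NUM_TASKS = 500            # size of SWE-bench Verified, per the article
ASSUMED_PASS_RATE = 0.70   # illustrative assumption, not a reported score

# How much a single task moves the headline score, in percentage points.
one_task_in_points = 100.0 / NUM_TASKS

# Sampling noise of the headline score, in percentage points.
standard_error_in_points = 100.0 * binomial_standard_error(ASSUMED_PASS_RATE, NUM_TASKS)

print(f"One task is worth {one_task_in_points:.2f} percentage points")
print(f"Sampling standard error is about {standard_error_in_points:.2f} percentage points")
```

Under these assumptions, a 0.1-point difference is smaller than the effect of solving one extra task and far smaller than the sampling noise of the score itself.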

The Two Failures That Triggered Retirement

OpenAI’s deeper investigation, which had at least six engineers review 138 of the hardest remaining problems, found two compounding failures that together made the benchmark indefensible as a standard.

The first failure is flawed task design. At least 59.4% of the audited tasks, roughly 82 of the 138, were found to be problematic. Specifically, 49 had tests that were too narrowly defined, rejecting functionally correct solutions because they enforced specific implementation details never stated in the problem description. An additional 26 had tests that required extra features that were never mentioned at all. A model could produce a genuinely correct solution and still fail these tasks.
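A toy example makes the "too narrow" failure concrete. Everything below is hypothetical and not taken from the benchmark: the imagined issue asks only for a `slugify` function that lowercases a title and joins its words with hyphens, while the over-specified test also demands a `separator` keyword that the issue never mentions.

```python
def slugify(title: str) -> str:
    """A correct solution to the hypothetical issue: lowercase, hyphen-joined words."""
    return "-".join(title.lower().split())


def behavior_test(candidate) -> bool:
    """Checks only the behavior the issue text asked for."""
    return candidate("Hello World") == "hello-world"


def overly_narrow_test(candidate) -> bool:
    """Also requires a `separator` keyword that the issue never mentioned."""
    try:
        return candidate("Hello World", separator="-") == "hello-world"
    except TypeError:
        # The candidate does not accept the unstated keyword, so the test fails it.
        return False


print(behavior_test(slugify))       # passes: the stated behavior is correct
print(overly_narrow_test(slugify))  # fails: an unstated implementation detail is enforced
```

The same solution passes one test and fails the other, even though nothing in the problem statement distinguishes them.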

The second failure is training data contamination. SWE-bench problems were sourced from popular open-source repositories that frontier model providers routinely include in pre-training data. OpenAI found that GPT-5.2, Claude Opus 4.5, and Gemini 3 Flash Preview could reproduce original gold-patch solutions verbatim from memory using only the task ID as a prompt. In one documented case, GPT-5.2’s chain-of-thought revealed it knew a specific argument name required by the test, even though that argument was never mentioned in the problem description.
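The article does not describe how OpenAI scored "verbatim" reproduction, so the following is only a minimal sketch of one way such a check could work: compare a model's output with the gold patch line by line after normalizing whitespace. The function names, the 0.9 threshold, and the sample patches are all assumptions made for illustration, and the step that actually prompts a model with a task ID is left out.

```python
from difflib import SequenceMatcher


def normalize_patch(patch):
    """Collapse whitespace runs and drop blank lines so formatting noise is ignored."""
    return [" ".join(line.split()) for line in patch.splitlines() if line.strip()]


def patch_similarity(generated_patch, gold_patch):
    """Line-level similarity in [0, 1]; 1.0 means identical after normalization."""
    matcher = SequenceMatcher(None, normalize_patch(generated_patch), normalize_patch(gold_patch))
    return matcher.ratio()


def looks_memorized(generated_patch, gold_patch, threshold=0.9):
    """Flag outputs that are near-copies of the gold patch (threshold is an assumption)."""
    return patch_similarity(generated_patch, gold_patch) >= threshold


# Hypothetical patches, not real benchmark data.
gold_patch = "-    return a+b\n+    return a + b + carry\n"
reindented_copy = "-  return a+b\n+  return a + b + carry\n"
unrelated_patch = "+    raise NotImplementedError\n"

print(looks_memorized(reindented_copy, gold_patch))
print(looks_memorized(unrelated_patch, gold_patch))
```

A high similarity score alone does not prove memorization, since short fixes can be rediscovered independently. It becomes much stronger evidence when the prompt contained only a task ID and no problem description.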

How Benchmark Gaming Works in Practice

Understanding why SWE-bench failed requires understanding how models interact with public test sets. When a benchmark’s test cases are publicly available, they inevitably enter the pre-training corpus of large language models. The model does not always memorize answers verbatim. Instead, it learns structural patterns of problems and solutions, which produces inflated benchmark scores without reflecting transferable coding ability.

Independent research published in 2024 and 2025 documented the scale of this problem across the original SWE-bench dataset. A comprehensive 2024 study found that 32.67% of successful patches involved solution leakage, where the fix was already outlined in the GitHub issue description or linked comments. A March 2025 study titled “Are ‘Solved Issues’ in SWE-bench Really Solved Correctly?” found that 7.8% of patches marked correct actually fail proper validation, and 29.6% behave differently than the intended fix when subjected to differential patch testing.
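Differential patch testing, in broad terms, means running the reference fix and a candidate fix on the same inputs and flagging any input where they behave differently. The sketch below is a toy illustration of that idea, not the cited study's tooling; both "fixes" and all inputs are hypothetical stand-ins.

```python
def gold_fix(values):
    """Stand-in for the developer's fix: mean of a list, 0.0 when the list is empty."""
    return sum(values) / len(values) if values else 0.0


def candidate_fix(values):
    """Stand-in for a model patch that agrees on non-empty lists but not on empty ones."""
    if not values:
        return None
    return sum(values) / len(values)


def outcome(func, arg):
    """Capture either the return value or the exception type, so both can be compared."""
    try:
        return ("ok", func(arg))
    except Exception as exc:
        return ("raised", type(exc).__name__)


def behavioral_differences(reference, candidate, inputs):
    """Return (input, reference outcome, candidate outcome) for every divergent input."""
    differences = []
    for arg in inputs:
        expected, actual = outcome(reference, arg), outcome(candidate, arg)
        if expected != actual:
            differences.append((arg, expected, actual))
    return differences


original_test_inputs = [[1, 2, 3], [10]]   # what the benchmark's tests happen to cover
extra_inputs = [[], [0, 0]]                # additional inputs used for the differential check

print(behavioral_differences(gold_fix, candidate_fix, original_test_inputs))
print(behavioral_differences(gold_fix, candidate_fix, extra_inputs))
```

The candidate looks correct on the original test inputs and diverges only on the extra ones, which is the kind of gap a pass/fail test suite can miss.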

A second mechanism, separate from data leakage, is optimization pressure. When a benchmark becomes high-stakes, developers consciously or unconsciously tune their models toward it. Hyperparameter choices, fine-tuning datasets, and inference strategies all get shaped by the desire to maximize a specific score. A model optimized this way scores well on that benchmark and underperforms on adjacent tasks that require the same underlying skill.

What This Means for AI Coding Agent Claims

Every major AI lab published SWE-bench Verified scores as evidence of coding capability. Those claims now require reinterpretation. A model scoring 70%+ on SWE-bench Verified was not necessarily solving 70% of real-world GitHub issues. It was scoring highly on a test set that had become progressively less separable from training data and was riddled with flawed tasks.

The gap between SWE-bench Verified and contamination-resistant evaluation is stark. Top models score around 23% on SWE-bench Pro, compared to 70%+ on SWE-bench Verified. SWE-bench Pro tasks are also harder, so the gap is not purely a contamination effect, but it indicates how far benchmark recall had drifted from genuine coding capability.

This does not mean AI coding agents are not useful. Tools like Cursor, GitHub Copilot, and OpenAI’s Codex-based systems demonstrably help engineers write, debug, and refactor code faster. The capability is real. The published benchmark score was an increasingly unreliable proxy for that capability.

SWE-bench Pro: The Endorsed Replacement

OpenAI explicitly endorses SWE-bench Pro, developed by Scale AI and released in September 2025, as the successor to SWE-bench Verified. OpenAI had also been reporting SWE-bench Pro scores internally for several months before making this official announcement.

SWE-bench Pro addresses contamination by using copyleft-licensed and private commercial repositories, ensuring problems were not part of frontier model training data. The tasks are substantially harder, with most requiring multi-file changes reflecting real enterprise-scale work, compared to SWE-bench Verified where roughly 90% of problems were estimated to take an expert engineer under one hour. OpenAI’s contamination auditor agent found far fewer contamination signals in SWE-bench Pro across all tested frontier models.

OpenAI is also building its own deeper, non-public evaluation frameworks. Mia Glaese, VP of Research at OpenAI, outlined the properties the team is targeting: longer-term tasks, open-ended design decisions, code quality and maintainability assessment, real-world product building, and human-intensive evaluation requiring domain knowledge. These properties are deliberately harder to game because they cannot be reduced to a simple pass-or-fail test suite.

The Broader Benchmark Credibility Problem in AI

OpenAI’s SWE-bench decision is one data point in a larger pattern. The AI evaluation ecosystem has a structural flaw: the benchmarks that become authoritative are the ones that get targeted, and targeting a benchmark degrades its signal value. This is a direct application of Goodhart’s Law: when a measure becomes a target, it ceases to be a good measure.

This dynamic is not unique to SWE-bench. Research has documented contamination issues across evaluations using MMLU, HumanEval, and several reasoning benchmarks. The LessLeak-Bench study published in February 2025 examined 83 software engineering benchmarks and found substantial overlap between benchmark content and LLM pre-training datasets, confirming 10.6% data leakage in SWE-bench Verified alone when evaluated against StarCoder’s training data.

The industry needs evaluation frameworks that are dynamic, private, and adversarially updated. METR focuses specifically on long-horizon autonomous task completion in controlled environments, which is harder to contaminate because task structures evolve. LiveCodeBench continuously incorporates new problems from competitive programming platforms after model training cutoffs, making memorization structurally difficult. These approaches are more expensive and slower than running a model against a static benchmark, but they produce substantially more reliable signal.
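The date-based idea behind a "live" benchmark can be sketched in a few lines: keep only problems published after a model's training cutoff. This is a minimal illustration of the principle and not LiveCodeBench's actual code or API; the `Problem` type, the problem IDs, and the dates are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Problem:
    problem_id: str   # hypothetical identifier
    published: date   # date the problem first appeared publicly


def post_cutoff_problems(problems, training_cutoff):
    """Keep only problems published strictly after the model's training cutoff."""
    return [p for p in problems if p.published > training_cutoff]


# Hypothetical problem pool and cutoff, for illustration only.
pool = [
    Problem("contest-001", date(2024, 11, 2)),
    Problem("contest-002", date(2025, 3, 15)),
    Problem("contest-003", date(2025, 9, 30)),
]
assumed_cutoff = date(2025, 1, 1)

eligible = post_cutoff_problems(pool, assumed_cutoff)
print([p.problem_id for p in eligible])
```

The filter is only as trustworthy as the stated cutoff, and each model may need a different eligible set, which is part of why this style of evaluation costs more to run than a static benchmark.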

What Rigorous AI Evaluation Looks Like Now

OpenAI’s Frontier Evals team has described the characteristics they want in next-generation coding benchmarks. The direction is clearly toward evaluation that reflects how software is actually built, not how well a model can recall GitHub history.

- Tasks should require multi-hour to multi-day effort from expert engineers, not just sub-one-hour fixes

- Grading should involve human reviewers with domain knowledge, not just automated test pass rates

- Problems should come from private or copyleft repositories to prevent training data overlap

- Open-ended design decisions should be part of the evaluation, not just narrow implementation correctness

- Real-world usage metrics, including how much AI actually accelerates engineering workflows, should supplement benchmark scores

For enterprise teams adopting AI coding tools, the practical implication is clear. Run structured internal pilots on representative tasks from your actual backlog. Three to four weeks of parallel testing across two candidate tools on genuine engineering tickets will give you more decision-relevant signal than any published leaderboard.

Considerations and Limitations

OpenAI’s transparency here is notable but incomplete. The company has not published a full quantitative breakdown of how much of its own historical SWE-bench Verified performance was attributable to contamination versus genuine capability. The move toward internal non-public evaluations also reduces external accountability. Private test sets are technically more resistant to contamination, but they make independent verification of AI capability claims harder for the research community and for enterprise buyers.

Frequently Asked Questions (FAQs)

What is SWE-bench Verified and why did OpenAI use it?

SWE-bench Verified is a 500-task benchmark that OpenAI helped create and launched in August 2024, with nearly 100 professional software engineers reviewing and verifying the tasks. It tested AI coding agents on real GitHub issue resolution. OpenAI used it as a core measure within its Preparedness Framework to track model autonomy and coding capability.

Why did OpenAI stop evaluating against SWE-bench Verified?

OpenAI’s Frontier Evals team found two compounding problems. First, at least 59.4% of the 138 hard tasks it audited were flawed because their tests were overly narrow or demanded unstated features. Second, all tested frontier models, including GPT-5.2, Claude Opus 4.5, and Gemini 3 Flash Preview, showed clear evidence of training data contamination, reproducing original solutions from memory.

What is SWE-bench Pro and why does OpenAI recommend it?

SWE-bench Pro is a benchmark developed by Scale AI, released September 2025, that uses private and copyleft-licensed repositories to minimize training data overlap. Tasks are harder and more diverse than SWE-bench Verified. OpenAI’s contamination auditor found significantly fewer contamination signals in SWE-bench Pro across all tested frontier models.

How big is the gap between SWE-bench Verified and SWE-bench Pro scores?

The gap is substantial. Top frontier models score 70% or more on SWE-bench Verified but drop to approximately 23% on SWE-bench Pro. Part of that difference comes from harder tasks, and part reflects how much of SWE-bench Verified performance came from training data recall rather than genuine problem-solving ability.

How does benchmark contamination happen in AI training?

Large language models train on massive internet text corpora that include GitHub repositories. When a benchmark’s test cases come from those same repositories, the model learns associated patterns before evaluation. For SWE-bench, the February 2025 LessLeak-Bench study confirmed 10.6% direct data leakage in SWE-bench Verified against StarCoder’s training data.

Does this mean AI coding tools are less capable than claimed?

Not less capable for practical tasks, but published benchmark scores were inflated. Tools like Cursor, GitHub Copilot, and Codex-based systems provide genuine value for code generation, debugging, and documentation. The issue is that specific percentage scores on SWE-bench Verified overstated generalizable coding ability, particularly for novel or complex codebases.

What is Goodhart’s Law and how does it apply here?

Goodhart’s Law states that when a measure becomes a target, it ceases to be a good measure. Once SWE-bench Verified became the industry’s standard for coding capability claims, labs optimized directly toward it. That optimization inflated scores without improving the underlying skill the benchmark was meant to reflect.

How should enterprises evaluate AI coding tools without reliable benchmarks?

Run structured internal pilots. Select 20 to 30 representative tickets from your actual backlog across different complexity levels. Have senior engineers blind-score outputs from two or three candidate tools over three to four weeks. That process produces more decision-relevant data than any published leaderboard, regardless of how the benchmark was constructed.
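The bookkeeping for such a pilot is simple enough to script. The sketch below is one possible setup under stated assumptions, not a prescribed process: the ticket IDs, complexity levels, tool names, and 1-to-5 scores are hypothetical, and the helpers only cover sampling tickets by complexity, hiding tool identity from reviewers, and averaging scores.

```python
import random
from collections import defaultdict


def sample_tickets(backlog, per_level, seed=0):
    """Pick up to per_level tickets from each complexity level of the backlog."""
    rng = random.Random(seed)
    by_level = defaultdict(list)
    for ticket_id, level in backlog:
        by_level[level].append(ticket_id)
    sample = []
    for level in sorted(by_level):
        tickets = by_level[level]
        sample.extend(rng.sample(tickets, min(per_level, len(tickets))))
    return sample


def blind_labels(tools, ticket_id, seed=0):
    """Shuffle tools per ticket so reviewers see 'Output A/B/...' and not tool names."""
    rng = random.Random(f"{seed}:{ticket_id}")
    shuffled = list(tools)
    rng.shuffle(shuffled)
    return {f"Output {chr(ord('A') + i)}": tool for i, tool in enumerate(shuffled)}


def mean_scores(scores):
    """Average reviewer scores per tool from (tool, score) pairs."""
    collected = defaultdict(list)
    for tool, score in scores:
        collected[tool].append(score)
    return {tool: sum(vals) / len(vals) for tool, vals in collected.items()}


# Hypothetical backlog: 60 tickets spread evenly over three complexity levels.
levels = ["low", "medium", "high"]
backlog = [(f"TICKET-{i:03d}", levels[i % 3]) for i in range(60)]
tools = ["tool_a", "tool_b"]

pilot_tickets = sample_tickets(backlog, per_level=8)   # 24 tickets, within the 20 to 30 range
labels = blind_labels(tools, pilot_tickets[0])
print(len(pilot_tickets), labels)
print(mean_scores([("tool_a", 4), ("tool_b", 2), ("tool_a", 2)]))
```

Keeping the label-to-tool mapping away from reviewers until scoring is finished is what makes the scores blind. Fixing the seed makes the ticket selection reproducible if the pilot is audited later.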